In my last blog post, I provided a script that queried the XML for the resource monitor ring buffer entries in sys.dm_os_ring_buffers to identify when the system was under memory pressure as a result of not having the ‘max server memory’ sp_configure option set correctly for the server. Since that post has gone live, I’ve gotten a number of emails asking about various ‘max server memory’ configurations and how I might interpret different memory configurations, performance counter values, DMV outputs, etc. One email, from a Microsoft employee named Cristopher Benge, asked about filtering the resource monitor ring buffer entries to exclude notifications generated from inside of the SQL Server process, which wasn’t something I remembered being shown, but I wrote the original script for parsing the XML years ago so I decided it was worth a little more investigation.

In looking into this on SQL Server 2008 R2 SP1, I found that there are two XML nodes that identify whether the entry was the result of process memory pressure, for example the plan cache consuming to many stolen pages from the buffer pool, or system memory pressure from outside of SQL Server. This was blogged about by Bob Dorr a couple of years ago, How it Works: What are the RING_BUFFER_RESOURCE_MONITOR telling me? To play around with this, I used a couple of the workload generators I’ve written in C# for the Memory Performance Tuning module of our IE2: Performance Tuning Immersion Events and started creating internal and external memory problems for SQL Server in one of my lab VMs where I had complete control over the activity being generated.

The first test that I ran was a plan cache pollution application I wrote that churns through gigabytes of plan cache with single use plans against any copy of AdventureWorks to show cache trimming occurring. To perform the tests I added two columns to the XML output for the IndicatorsProcess and IndicatorsSystem elements from the XML:

–System Memory Usage

SELECT

EventTime,

record.value('(/Record/ResourceMonitor/Notification)[1]', 'varchar(max)') as [Type],

record.value('(/Record/ResourceMonitor/IndicatorsProcess)[1]', 'int') as [IndicatorsProcess],

record.value('(/Record/ResourceMonitor/IndicatorsSystem)[1]', 'int') as [IndicatorsSystem],

record.value('(/Record/MemoryRecord/AvailablePhysicalMemory)[1]', 'bigint') AS [Avail Phys Mem, Kb],

record.value('(/Record/MemoryRecord/AvailableVirtualAddressSpace)[1]', 'bigint') AS [Avail VAS, Kb]

FROM (

SELECT

DATEADD (ss, (-1 * ((cpu_ticks / CONVERT (float, ( cpu_ticks / ms_ticks ))) – [timestamp])/1000), GETDATE()) AS EventTime,

CONVERT (xml, record) AS record

FROM sys.dm_os_ring_buffers

CROSS JOIN sys.dm_os_sys_info

WHERE ring_buffer_type = 'RING_BUFFER_RESOURCE_MONITOR') AS tab

ORDER BY EventTime DESC;

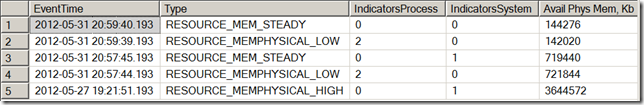

After allowing the plan cache pollution application to run for a couple of minutes I ran the above query and received the following output:

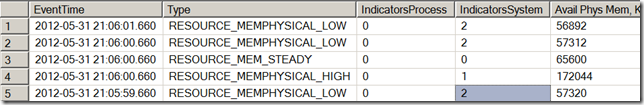

This shows that the resource monitor is generating low memory notifications into the ring buffers for process level memory pressure, so we definitely need to take this into account when looking at the ring buffer output to make decisions about whether or not SQL Server is properly configured for ‘max server memory’. After running these tests, I switched to a different application that I wrote for our Immersion Events that does nothing but consume memory from the OS in a similar manner to how another instance of SQL Server might consume memory or a VM balloon driver might consume memory to force memory pressure in Windows to cause applications to trim their usage. When this application is run over a couple of minutes time, the following out put is received:

This shows that we indeed had external memory pressure at the time that the low memory notifications occurred in the SQLOS resource monitor. One of the questions I was asked was whether I would filter the notifications that were internal process memory pressure events out, and my answer to that question would be “Absolutely Not!!” I would only add the two columns to the output as shown in the query above. The reason for this is that a low memory notification, whether it is occurring from inside SQL Server as a process, or from the system being under memory pressure is a sign that there is a problem that we need to look into further.

12 thoughts on “Identifying External Memory Pressure with dm_os_ring_buffers and RING_BUFFER_RESOURCE_MONITOR”

This is brilliant! Thanks for all the blogs and hard work!

Hi Jon,

I ran the script on one of the system where I am having memory pressure issues, I see in output some of the records RESOURCE_MEMPHYSICAL_LOW but both indicatorProcess and IndicatorSystem are 0. Wondering what it is

Thanks.

Woot, now I’m famous! Seriously, great follow up to the prior (very informative) blog on max server memory. Ironically, the day after I contacted you this very question [of appropriate max server memory estimating] appeared in the internal DL’s and having your blog as a reference was invaluable to the discussion. Nice work, Jonathan.

Hey Farhan,

I am going to email you a different script so we can maybe see what is triggering those events.

Hey Mike,

The IndicatorsProcess means that the SQL Server instance is under internal memory pressure, so you have something internal to SQL that is triggering the Resource Monitor to log the memory pressure. That is something I would look into, to find out what is causing the internal pressure.

I’m also seeing a large number of RESOURCE_MEMPHYSICAL_LOW in which both “indicator” columns are zero, like Farhan. In my cases, it’s not obvious that the servers in question are under memory pressure.

I would, however, like to know what it means. Has anyone found the answer?

Just to confirm, if the IndicatorsProcess is >0, the memory pressure is being reported from the instance the DMV is being run on, correct? I have a multi-instance server and one instance is reporting RESOURCE_MEMPHYSICAL_LOW with IndicatorsProcess = 2.

Yes it is instance specific.

Jonathon,

Thank you for all the great blog posts. After running the script on several servers, I have also found one server producing results like Farhan Soomro’s reply above. There are RESOURCE_MEMPHYSICAL_LOW records with both IndicatorProcess and IndicatorSystem returning 0. I would like to understand why and if the script you shared with Farhan may have determined a cause? Thanks.

Without taking a memory dump of the process you can’t figure it out for certain, but generally from dump analysis it is related to pool level pressure and there are no indicators for this in the XML currently.

Thanks a lot, Jonathan, this is indeed a fact-displaying script to demonstrate what a proper “max server memory” is needed. These days, we can see server with 1TB memory, and your original algorithm, i.e. 1GB for OS + (16-4)/4 + (1024-16)/8= 130GB to be reserved, will make lots of people challenging whether a DBA has reserved too much memory. So this script will be good to dispute such challenges if needed.

It takes memory to map and address memory. The reserved memory from the calculation is accounting for the 7% commit overhead of kernel Page Table Entries required to map and address the memory being consumed by the process. As my other blog post points out, it is absolutely going to result in some Available Free memory being left on the table for tuning the initial value upwards, but it is ALWAYS easier to see Available Free memory trending over time and make an educated adjustment higher for max server memory than it is to discover that you have set it too high and SQLOS is constantly making memory adjustments based on signals from the OS that memory pressure exists. The problem with any “fixed” algorithm for determining where to set max server memory is that on small memory systems they might be too high resulting in memory pressure, because of the initial memory requirements of the OS and other processes consume a larger ratio of the total memory, and on large memory systems they will likely too low leaving available memory, because of the initial memory requirements of the OS and other processes consumes a much lower ratio of the total memory. This is why the math is reserving 25% of the first 16GB vs only 12.5% of the memory above the 16GB. It could easily be 10% for the memory above the 16GB but I would rather remain conservative initially and the math is always prettier when it is divide by 8GB. LOL!