You may have heard of Intel Optane Technology, but perhaps you aren’t quite sure what that term actually refers to, and whether it is relevant for SQL Server. Unfortunately, Intel Optane is an overloaded marketing term that covers several different product categories and specific products. Intel also has Optane product offerings for the consumer market, which further confuses the issue.

All of these different products use 3D-XPoint (pronounced 3D Cross Point) technology in different ways for different purposes. First, we have their consumer products.

Consumer Products

Intel Optane Memory Series (Stony Beach, Q1 2017)

Intel Optane Memory M10 Series (Stony Beach, Q1 2018)

Intel Optane Memory H10 with Solid State Storage (Teton Glacier, Q2 2019)

Intel Optane SSD 800P Series (Brighton Beach, Q1 2018)

Intel Optane SSD 900P Series (Mansion Beach, Q4 2017)

Intel Optane SSD 905P Series (Mansion Beach, Q3 2018)

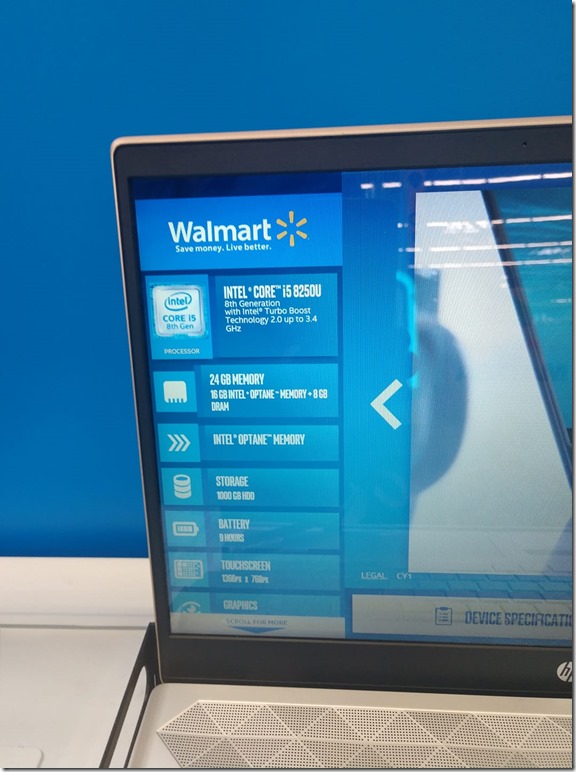

Their consumer products include system accelerators that act as a cache layer in front of a magnetic HDD or a slow SATA NAND SSD. These include the Intel Optane Memory and Intel Optane Memory M10 Series products. They are useful for their intended purpose, but some system vendors are making dubious marketing claims about them. You will see new systems that claim to have 24GB of “Memory” that turns out to be 16GB of Intel Optane Memory and 8GB of DDR4 DRAM. This is confusing to a typical consumer, and somewhat deceptive in my opinion. Figure 1 shows an example of this.

Figure 1: New Laptop with 24GB of “Memory”

The Intel Optane Memory H10 with Solid State Storage series are hybrid M.2 2280 storage devices that put an Optane cache in front of QLC NAND SSD storage on a single card. They have 256GB, 512GB, or 1TB of usable capacity for storage. These should give close to Optane SSD storage performance for less intense workloads at a lower cost than a 100% Optane SSD.

There are also pure Optane SSD storage offerings such as the 800P, 900P, and 905P that give the best storage performance from the consumer line. I have a couple of Intel Optane 900P PCIe NVMe storage cards in two of my personal desktop systems, and I have been very impressed with them over the past 18 months. Both the 900P and newer, faster 905P series products are a great choice for an OS drive for a developer or DBA desktop workstation. They also work very well in gaming rigs.

Data Center Products

Intel also has a number of different data center product lines under the Optane umbrella.

Intel Optane SSD DC P4800X Series (Cold Stream, Q4 2017)

Intel Optane SSD DC P4801X Series (Cold Stream, Q1 2019)

Intel Optane SSD DC D4800X Series (Q2 2019)

Intel Optane DC Persistent Memory (Apache Pass, Q2 2019)

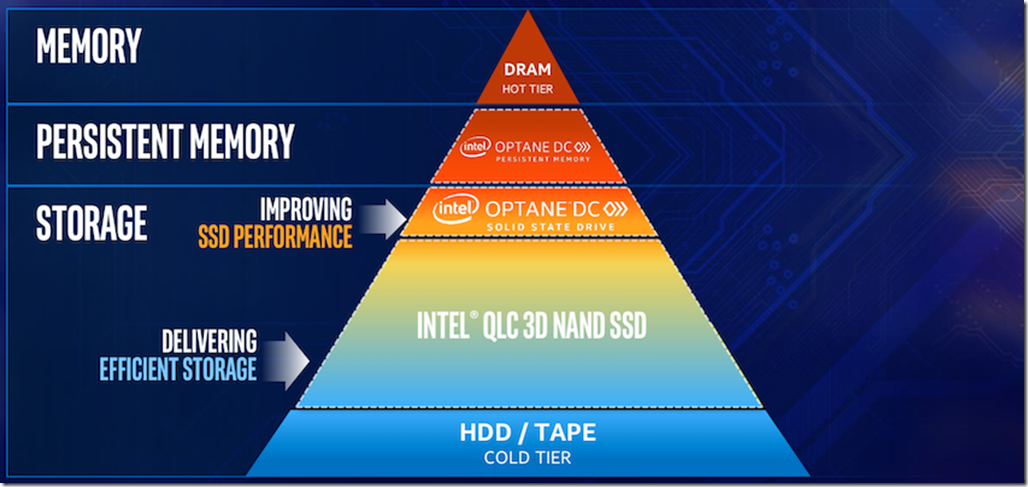

Intel has a pyramid diagram that they like to show to explain where these data center products fit in the modern data access hierarchy.

Figure 2: Intel Data Access Pyramid

Here are some more details about Intel’s Optane Data Center products.

Intel Optane SSD DC P4800X Series

These are extremely high performance block storage devices that include 375GB, 750GB, and 1.5TB capacities. They are available in HHHL AIC and U.2 15mm form factors. They all have a PCIe 3.0 x4 interface and use the NVMe protocol. Most existing servers will be able to use these in the HHHL AIC form factor in an available PCIe 3.0 x4 expansion slot. It is common to use two of these cards in a Storage Spaces RAID 1 array for redundancy. They are also well-suited for Availability Group (AG) nodes.

These can be used with any version of SQL Server and any relatively recent version of Windows Server. You will want to make sure that you use the Intel Datacenter NVMe driver rather than the generic Microsoft NVMe driver with these drives.

Once you have a couple of these cards, you can use them for pretty much anything you want for SQL Server usage. For example, you can have your tempdb files here, or perhaps your transaction log files. I have had some clients simply move all of their data, log, and tempdb files to Intel Optane SSD DC P4800X arrays. These cards currently run about $4.00-5.00/GB, which is more expensive than most enterprise NAND flash storage, but not outrageously so.

They offer excellent random read and write performance at low queue depths, extremely low latency, and predictable, steady performance under load, along with greater write endurance than NAND-based flash. They also do not lose any performance as they get full. Here are some articles and reviews of these drives:

Using Intel Optane Storage for SQL Server

The Intel Optane SSD DC P4800X (375GB) Review: Testing 3D XPoint Performance

Intel Optane SSD DC P4801X Series

These are PCIe NVMe M.2 22110 (110mm) cards that range from 100GB to 375GB in capacity. They have the same technology and main specifications as the larger form factor Intel Optane SSD DC P4800X Series cards. Not as many existing servers have PCIe M.2 slots, but an increasing number of new servers do. As long as your server supports this form factor, you can use them the same way you would use the Intel Optane SSD DC P4800X Series cards. You can also get M.2 to PCIe expansion slot adapters that will let you use these M.2 cards in older servers.

Intel Optane SSD DC D4800X Series

These are Optane SSD drives that have dual-port controllers for better redundancy. These were just announced at the Intel Data-Centric Innovation Day on April 2, 2019. So far, they are not in the Intel ARK database, and they don’t appear to be readily available yet.

Intel Optane DC Persistent Memory

This is the product line that is newer and less familiar to many people. These are persistent memory modules that also use 3D-XPoint technology. They fit in DDR4 memory slots on servers with selected 2nd Generation Intel Xeon Scalable Processors (Cascade Lake-SP). They are available in 128GB, 256GB, and 512GB capacities. If you have the requisite processor and supported operating system or hypervisor, you can use Optane DC PM modules in a system along with conventional DDR4 DRAM modules. You can have up to six persistent memory modules per processor, but you have to have at least one DRAM module per processor.

You can use Intel Optane DC Persistent Memory in one of three modes. These are Memory Mode, App Direct Mode, and Storage over App Direct Mode.

Memory Mode

Memory Mode is when you use Intel Optane DC Persistent Memory Modules to increase the total size of your memory by using the larger capacity Intel Optane DC Persistent Memory Modules in place of some of your DDR4 DRAM DIMMs. You use some (up to half) of your RAM slots to hold Intel Optane PMEM DIMMs. You put regular DDR4-2933 DRAM in your other memory slots, which is then invisible to the OS. The Intel Optane PMEM is less expensive per GB compared to 128GB DDR4-2933 DIMMs, and it is available in higher capacities than you can get with DDR4 DRAM.

In this mode, the DDR4 DRAM is “near memory” which is used as a write-back cache. The Optane PMEM is the “far memory”, which actually shows up as the amount of memory visible to the operating system. The ratio of far memory to near memory can vary. A common recommendation from Intel is a 4:1 PMEM-to-DRAM capacity ratio. So for example, you could have six 128GB PMEM modules and six 32GB DDR4 DRAM modules per socket, which would give you 768GB of capacity from the PMEM, with 192GB of DRAM cache in front of it.
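As a quick sanity check on a planned Memory Mode layout, the per-socket arithmetic above can be sketched in a few lines of Python. The function name and its parameters are just illustrative, not part of any Intel tool.

```python
def memory_mode_layout(pmem_dimms, pmem_gb, dram_dimms, dram_gb):
    """Return (far_gb, near_gb, ratio) for one socket in Memory Mode."""
    far_gb = pmem_dimms * pmem_gb    # PMEM capacity the OS sees as memory
    near_gb = dram_dimms * dram_gb   # DRAM acting as cache, hidden from the OS
    return far_gb, near_gb, far_gb / near_gb


# The example from the text: six 128GB PMEM and six 32GB DRAM modules per socket
far_gb, near_gb, ratio = memory_mode_layout(6, 128, 6, 32)
print(f"OS-visible memory: {far_gb}GB, DRAM cache: {near_gb}GB, ratio {ratio:.0f}:1")
```

Plugging in other module counts and sizes shows quickly how far a given configuration drifts from the 4:1 recommendation.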

No application changes are required to use Memory mode. In this mode, the PMEM is volatile, which means that the data is cleared when you cycle power (just like DRAM).

App Direct Mode

In App Direct Mode, a PMEM-aware application is required. This mode adds a new tier between Memory Mode and block mode storage. It is byte addressable just like memory. With SQL Server 2019 on Linux, you can host any or all of your database files on DAX volumes that are built on Intel Optane DC PMEM modules with App Direct mode. You can also use the new Hybrid Buffer Pool feature in SQL Server 2019 with App Direct mode.

Storage over App Direct Mode

Storage over App Direct mode presents the PMEM as block mode storage, using traditional read/write instructions that work with existing file systems. You must have an NVDIMM driver for this mode to be supported. This will have higher latency than App Direct mode, but it doesn’t require any application changes. This means that legacy versions of SQL Server should be able to use this mode.

Hybrid Buffer Pool

SQL Server 2019 CTP 2.1 introduced a new feature called Hybrid Buffer Pool. This feature allows the database engine to directly access data pages in database data files that are stored on PMEM DAX volume devices using App Direct Mode.

In a traditional system without persistent memory, SQL Server caches data pages in the DRAM buffer pool. With Hybrid Buffer Pool, SQL Server skips performing a copy of the page into the DRAM-based portion of the buffer pool, and instead references the page directly on the database file that lives on a PMEM DAX volume device.

Access to data files in PMEM for Hybrid Buffer Pool is performed using memory-mapped I/O, also known as enlightenment. This brings performance benefits from avoiding the copy of the page to DRAM, and from skipping the I/O stack of the operating system to access the page on the persistent memory storage volume. Only clean pages can be referenced directly on a PMEM device. When a page becomes dirty, it is copied into the DRAM buffer pool, and it is eventually written back to the PMEM device and marked clean again.

Microsoft recommends that you use the largest allocation unit size available for NTFS (2MB in Windows Server 2019) when formatting your PMEM device on Windows and ensure the device has been enabled for DAX (Direct Access). This feature is available on both SQL Server 2019 on Windows and SQL Server 2019 on Linux. With SQL Server 2019 CTP 2.1, you need to enable startup trace flag 809 to enable this feature.

Optane Issues

If you use any Intel Optane DC Persistent Memory Modules in your system (in any of the three modes), they run at 2666 MHz, and your regular DDR4-2933 DRAM will also run at the slower 2666 MHz speed. Intel Optane DC PMEM performs better for reads than for writes. Sequential read latency is about 170ns while sequential write latency is about 320ns. Sequential read bandwidth is about 7.6 GB/sec per DIMM, while sequential write bandwidth is only about 2.3 GB/sec per DIMM. These figures are all significantly worse than modern DDR4-2933 DRAM. Intel Optane DC PMEM in Memory mode is faster than anything else that is lower in the data retrieval pyramid, but it simply does not compare to modern DRAM performance.
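To put the read/write asymmetry in perspective, here is a small Python sketch that turns the approximate per-DIMM figures quoted above into ratios. The variable names are mine, and the inputs are only the rough numbers from this article, not measurements of any particular system.

```python
# Approximate per-DIMM figures quoted in the text above
read_latency_ns, write_latency_ns = 170, 320
read_gb_per_sec, write_gb_per_sec = 7.6, 2.3

# How much slower writes are than reads, by latency and by bandwidth
latency_penalty = write_latency_ns / read_latency_ns
bandwidth_advantage = read_gb_per_sec / write_gb_per_sec

print(f"Write latency is about {latency_penalty:.1f}x read latency")
print(f"Read bandwidth is about {bandwidth_advantage:.1f}x write bandwidth")
```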

Intel Optane DC PMEM is less expensive per GB compared to 128GB DDR4 DRAM modules, but not compared to lower capacity 32GB DDR4 DRAM modules. The price per GB of Intel Optane DC PMEM goes up as the capacity increases, but not as steeply as with the highest capacity DRAM modules.

Here are some relevant articles about Intel Optane products.

Intel Optane DC Persistent Memory Module (PMM)

Supermicro SuperServer with Intel Optane DC Persistent Memory First Look Review

Pricing of Intel’s Optane DC Persistent Memory Modules Leaks: From $6.57 Per GB

Intel Optane DIMM Pricing: $695 for 128GB, $2595 for 256GB, $7816 for 512GB (Update)

Intel® Optane™ DC Persistent Memory Operating Modes Explained
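The rising price per GB is easy to see if you work it out from the module prices in the pricing article title listed above. This is only a sketch using those reported prices, which may not match what you are actually quoted.

```python
# Reported module prices from the article title above (capacity in GB -> USD)
module_prices = {128: 695, 256: 2595, 512: 7816}

# Price per GB for each module capacity
price_per_gb = {gb: price / gb for gb, price in module_prices.items()}

for gb, per_gb in price_per_gb.items():
    print(f"{gb}GB module: ${per_gb:.2f}/GB")
```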

Intel Optane and SQL Server

After all of this, where are we with Intel Optane regarding SQL Server usage? This will depend on the Intel Optane product, your workload, and your current/desired operating environment.

Intel Optane SSD P4800X

I am a big fan of the Intel Optane SSD P4800X series of drives for on-premises SQL Server usage. They just work, on any version of SQL Server on any recent operating system on any server with PCIe 3.0 support. They don’t require 2nd Generation Intel Xeon Scalable Processors. The only problems are their availability and relatively high price per GB of capacity.

Intel Optane DC Persistent Memory

Intel Optane DC Persistent Memory gets a more mixed verdict. I think Memory mode is not going to be a good fit for most SQL Server workloads. Scaling the earlier per-socket example up to a two-socket server (with twelve 32GB DDR4-2933 DIMMs and twelve 128GB Optane PMEM DIMMs), you would have 384GB of near memory cache in front of 1,536GB of Optane in Memory mode, all running at 2666 MHz. Once your working set exceeds 384GB, you will be hitting the much slower Optane far memory. The current pricing breakdown for this configuration would be about $2,700 for twelve 32GB DDR4-2933 DIMMs and about $9,600 for twelve 128GB Intel Optane DIMMs. This would be about $12,300 total.

In most cases, you would be better off with twenty-four 32GB DDR4-2933 DIMMs in a two-socket server, running at full speed. This configuration would give you 768GB of very fast DRAM for your buffer pool. This memory would cost about $5,400 at current prices. Saving about $7,000 is nice, but it is insignificant compared to your SQL Server core licensing costs. What is more important is the likely much better performance for most workloads from having nothing but fast DRAM rather than a mixture of DRAM and PMEM in Memory mode.
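The comparison between the two configurations can be laid out side by side with a short Python sketch. The per-DIMM prices here are simply the quoted totals divided by twelve, so treat them as rough assumptions rather than real quotes.

```python
# Approximate per-DIMM prices implied by the totals quoted above (USD)
DRAM_32GB_PRICE = 2700 / 12     # about $225 per 32GB DDR4-2933 DIMM
PMEM_128GB_PRICE = 9600 / 12    # about $800 per 128GB Optane PMEM DIMM

# Two-socket server, Memory Mode: twelve DRAM DIMMs plus twelve PMEM DIMMs
mixed_cost = 12 * DRAM_32GB_PRICE + 12 * PMEM_128GB_PRICE
mixed_visible_gb = 12 * 128     # only the PMEM is visible to the OS

# Two-socket server, all DRAM: twenty-four 32GB DIMMs
all_dram_cost = 24 * DRAM_32GB_PRICE
all_dram_gb = 24 * 32

print(f"Memory Mode: {mixed_visible_gb}GB visible, ${mixed_cost:,.0f}")
print(f"All DRAM:    {all_dram_gb}GB visible, ${all_dram_cost:,.0f}")
print(f"Difference:  ${mixed_cost - all_dram_cost:,.0f}")
```

The Memory Mode configuration shows twice as much memory to the operating system, but only 384GB of it is backed by DRAM-speed cache, while all 768GB of the all-DRAM configuration runs at full speed.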

One bad scenario (for SQL Server) that I hope we don’t see is heavy Memory mode usage on virtualization hosts. Imagine a two-socket virtualization host that has twelve 512GB Optane PMEM DIMMs and twelve 32GB DDR4-2933 DRAM DIMMs. This host would have 6,144GB of PMEM capacity, with only 384GB of DRAM cache in front of it. That might be great for web-server VMs, but probably not so great for SQL Server VMs that have a significant workload.

App Direct mode is more interesting. I think that the Hybrid Buffer Pool feature may work very well (much better than the old Buffer Pool Extension feature), and I like the fact that it is available for both SQL Server 2019 on Windows and SQL Server 2019 on Linux. You should also be able to use the Persistent log buffer feature from SQL Server 2016 with App Direct mode on both Windows and Linux. SQL Server 2019 on Linux will be “fully enlightened” which means you will be able to store any type of SQL Server database file on a DAX volume that is using Optane PMEM in App Direct mode.

Storage over App Direct mode also looks very useful. It will let you use Optane PMEM as very fast block mode storage with older versions of SQL Server, older versions of Windows Server, and possibly older versions of your favorite hypervisor. All you need is an NVDIMM driver. It will still require a server with 2nd Generation Intel Xeon Scalable Processors though.