This month the SQLskills team is presenting a series of blog posts aimed at helping Accidental/Junior DBAs ‘keep the SQL Server lights on’. It’s a little taster to let you know what we cover in our Immersion Event for The Accidental/Junior DBA, which we present several times each year. You can find all the other posts in this series at https://www.sqlskills.com/help/AccidentalDBA. Enjoy!

One of the most common mistakes that I see people make as they configure a new database server is to have an improperly configured or simply inadequate storage subsystem for their intended database workload. It is not that unusual for someone to specify the components for a new server with high-end Intel processors and lots of physical RAM, but with only eight internal, 10K magnetic drives for their entire storage subsystem. This is a recipe for disaster, unless you have a very light workload. A little analogy that I like to use in presentations is when you go to a gym and see guys who only work on their upper body and don’t do any leg work. They end up looking ridiculous and are not really very strong, just as a database server will be crippled by an inadequate storage subsystem.

From a performance perspective, it is generally better to have a larger number of smaller drives in a disk array than a smaller number of larger drives. Each disk, whether it is a conventional magnetic drive or a solid state drive, has certain performance limits in terms of sequential throughput (MB/sec) and random Input/Output Operations per Second (IOPS). Having more drives in the array will increase the performance of the array, at least until you run into the limits of some other component in the storage subsystem, such as the RAID controller, HBA, or the PCI-E slot you are using.
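To make that scaling argument concrete, here is a minimal Python sketch. It assumes that random I/O performance scales linearly with the number of drives until some other component caps it, which is a simplification. The function name and the per-drive and controller figures are hypothetical values chosen only for illustration, not measurements.

```python
def array_iops(drive_count, iops_per_drive, controller_iops_limit):
    """Naive estimate of array IOPS.

    Assumes linear scaling with drive count until another component
    (RAID controller, HBA, PCI-E slot) becomes the bottleneck.
    """
    return min(drive_count * iops_per_drive, controller_iops_limit)


# Hypothetical figures for illustration only.
IOPS_PER_DRIVE = 180      # assumed random IOPS for one magnetic drive
CONTROLLER_LIMIT = 3000   # assumed ceiling imposed by the controller

for drives in (4, 8, 16, 24):
    estimate = array_iops(drives, IOPS_PER_DRIVE, CONTROLLER_LIMIT)
    print(f"{drives:2d} drives -> about {estimate} IOPS")
```

With these assumed numbers, the estimate grows with each added drive up to 16 drives, and the 24-drive array is held at the controller limit. Real arrays will not scale this cleanly, so treat this as a planning aid and not a substitute for testing.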

It is far too easy to focus only on how much disk space you need for the various types of database files (SQL Server data files, log files, tempdb files, and database backup files), rather than concentrating on the required performance metrics for each of these file types. You need to consider how much sequential read and write performance you need (in terms of MB/sec), and how much random read and write performance you need (in terms of IOPS), for each of these database file types under your workload. You also need to think about your disk redundancy requirements and your available budget.

Thinking about all of this will help you and your storage administrator decide how to properly configure your disk subsystem to get the best performance and redundancy possible with your available resources. This is far preferable to simply calculating your disk space requirements, and asking for a certain amount of disk space.
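The difference between sizing for capacity and sizing for performance can be shown with a little arithmetic. The sketch below is a rough illustration only: it ignores RAID overhead, caching, and controller limits, and the function name, drive size, per-drive IOPS, and workload figures are all hypothetical assumptions.

```python
import math


def drives_needed(required_gb, required_iops, drive_gb, drive_iops):
    """Return (drives for capacity, drives for IOPS, drives to request).

    Ignores RAID overhead and caching; a deliberately simple estimate.
    """
    by_capacity = math.ceil(required_gb / drive_gb)
    by_iops = math.ceil(required_iops / drive_iops)
    return by_capacity, by_iops, max(by_capacity, by_iops)


# Hypothetical workload: 1,200 GB of data files needing 2,500 random IOPS,
# built from assumed 600 GB drives that each deliver about 180 IOPS.
by_capacity, by_iops, total = drives_needed(1200, 2500, 600, 180)
print(f"Capacity alone: {by_capacity} drives")
print(f"IOPS alone:     {by_iops} drives")
print(f"Plan for:       {total} drives")
```

Under these assumptions, two drives would satisfy the space requirement, but fourteen are needed to meet the random I/O requirement. Asking only for a certain amount of disk space hides that gap.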

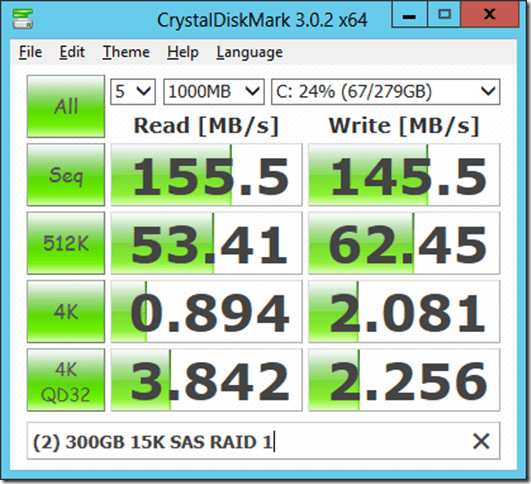

There is a free and easy-to-use benchmark tool that you can use to quickly compare the performance of different types of disks and disk arrays. CrystalDiskMark, which is available from Crystal Dew World, is a fairly well-known disk subsystem benchmark. You can select the number of test runs, desired file size, desired test file type, and which logical drive you want to test. It allows you to measure:

- Sequential read and write performance in megabytes/second

- Random read and write performance for a 512K block size

- Random read and write performance for a 4K block size

- Random read and write performance for a 4K block size with a queue depth of 32
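When a benchmark reports random I/O results in MB/sec for a fixed block size, you can convert them to approximate IOPS (and back), since throughput is simply IOPS multiplied by the block size. The helper names below are hypothetical, and the sketch assumes 1 MB = 1024 KB; a given tool may use decimal megabytes instead, so treat the results as approximate.

```python
def mb_per_sec_to_iops(mb_per_sec, block_size_kb):
    """Approximate IOPS implied by a throughput figure at a fixed block size."""
    return mb_per_sec * 1024 / block_size_kb


def iops_to_mb_per_sec(iops, block_size_kb):
    """Approximate throughput implied by an IOPS figure at a fixed block size."""
    return iops * block_size_kb / 1024


# Hypothetical result: 2.0 MB/sec on a random test with a 4K block size.
print(mb_per_sec_to_iops(2.0, 4))

# Hypothetical requirement: 5,000 IOPS at a 4K block size.
print(iops_to_mb_per_sec(5000, 4))
```

This also shows why a small block size random test can report a MB/sec figure that looks very low next to the sequential results even when the drive is performing normally.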

There are other disk benchmarks such as SQLIO that will do a much more thorough job of benchmarking your disk subsystem, but they are a little more difficult to work with. Using CrystalDiskMark should be a supplement to other disk benchmarking that you do. I like to do my first round of testing on each logical drive using CrystalDiskMark before I do more detailed, time-consuming testing with SQLIO. You should test all of your logical drives with these tools before you install SQL Server. Figure 1 shows what the results from CrystalDiskMark look like.

Figure 1: CrystalDiskMark Results for two 300GB 15K SAS drives in RAID 1

This shows you the performance characteristics for a two drive, RAID 1 array with 300GB, 15K rpm conventional magnetic drives. An array like this is commonly used for the system drive in a database server to get decent performance and to have some redundancy for the operating system and the SQL Server binaries (for a server that is not part of a fail-over cluster instance). As an accidental DBA, you need to think about your desired performance characteristics and your required redundancy levels as you decide how to lay out your physical and logical disk configuration. You have to think about the different types of database files, and how they will be used with your type of workload as you make these decisions. No matter how you decide to configure your disk subsystem, it is very important to test each logical drive with CrystalDiskMark and SQLIO before you install SQL Server, so that you don’t have any unpleasant surprises later!


5 thoughts on “The Accidental DBA (Day 2 of 30): Hardware Selection: Disk Configurations and RAID -> Performance not Capacity”

Hi Glenn,

Thanks for the explanations.

Could you explain what you mean by “(for a server that is not part of a fail-over cluster instance)”, as we are just now installing a SQL cluster with RAID 1 for the binaries?

Is it for the ‘decent performance’ or for the ‘redundancy’ part ?

Thank you,

Kukah

When a server is part of a fail-over cluster instance, the SQL Server binaries are usually installed on one of the cluster drives instead of the local C: drive.

Understood.

Thank you.

Glenn,

How do you deal with SAN volumes that are presented to the DBA when you don’t have any visibility into the SAN settings?

Do you know of any resources that explain SAN caching, volumes, storage pools, stripe sizes, etc?

I’d like to understand SAN settings better and also be able to educate the SAN admin 🙂

Thanks,

Mark

Hi Glenn.

First of all, thanks for the post, and thanks to the SQLskills team for the entire 30-day series of posts. When I read about performance in blogs or books, I always read that we need to take our workload type into consideration, but I have not read much about “workload types”. Do you know of any blog, book, or whitepaper where I can learn about the recommendations for the different workload types? For example, I guess a reporting database should be configured differently than a point-of-sale system, and differently again than a data warehouse. Any recommendations on that matter will be highly appreciated.

Thanks! 🙂