Last week the SQL Server Tools team released a new version of Management Studio, 18.4, and it dropped in the middle of my Query Store full day pre-con on Monday. Those two are related. There were some changes in SSMS 18.4 specific to Query Store, and for a few seconds over lunch I thought about downloading the new version and running it for my afternoon demos. But…I didn’t want to anger the demo Gods, so I stuck with 18.3.

What’s New?

The list of features and fixes for each release of SSMS can be found here, and for this post I’m interested in the features related to Query Store. Within Management Studio we’ll create a new database named QS_Settings and turn on Query Store with the default options:

/*

Of note: I NEVER recommend creating a database

with this syntax, I am being lazy and just accepting all

defaults, JUST for demo purposes

*/

USE [master];

GO

CREATE DATABASE [QS_Settings];

GO

ALTER DATABASE [QS_Settings] SET QUERY_STORE = ON;

GO

ALTER DATABASE [QS_Settings] SET QUERY_STORE (

OPERATION_MODE = READ_WRITE

);

GO

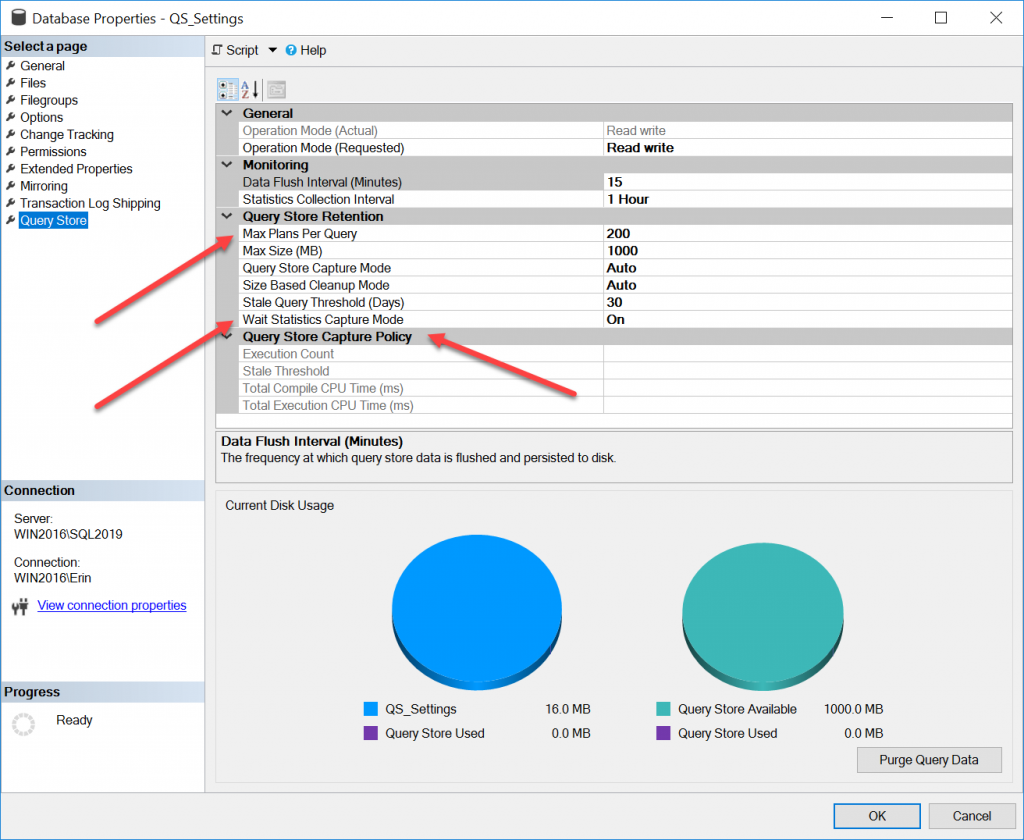

If we look at the Query Store page on the Database Properties window, you’ll see some additional entries:

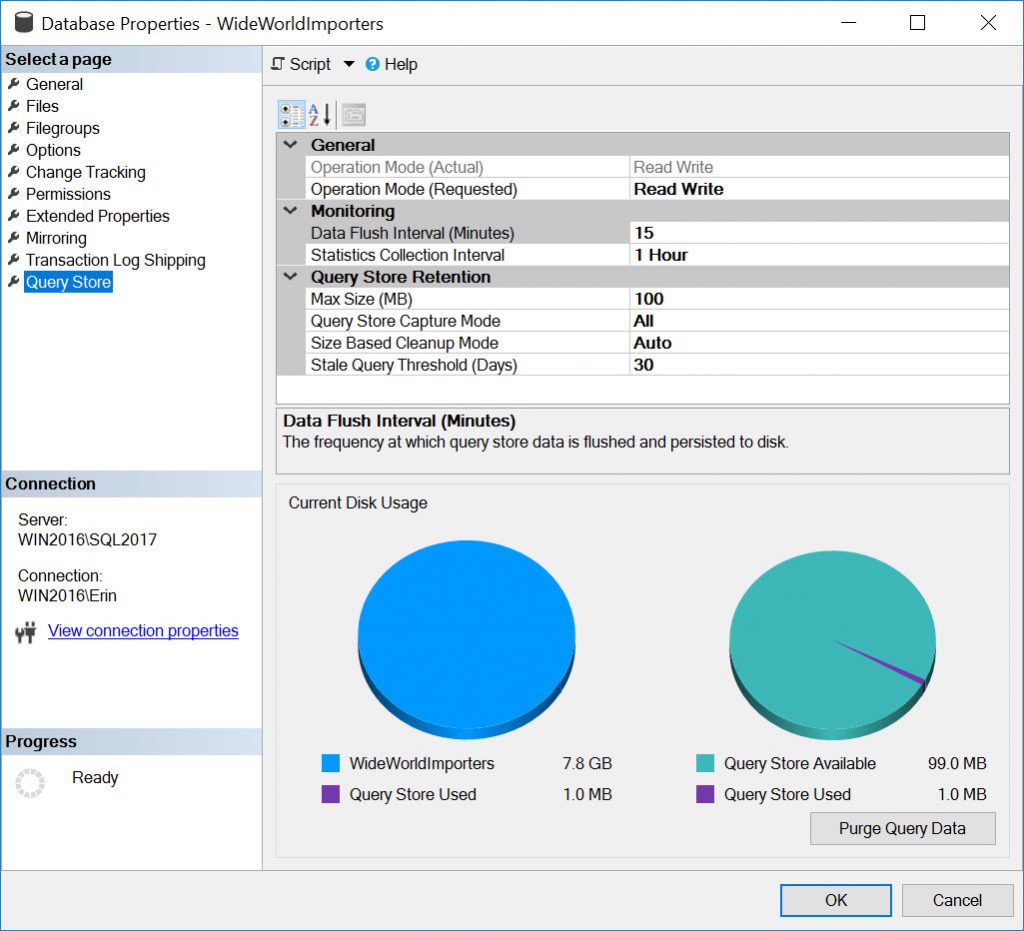

For comparison, here is a screen shot of the same window in SSMS 18.3:

There are two options that, previously, you could only modify using T-SQL:

- MAX_PLANS_PER_QUERY

- WAIT_STATS_CAPTURE_MODE

And there is an entirely new section specific to the CUSTOM value for QUERY_CAPTURE_MODE, which was added in SQL Server 2019. In addition, the default values for some settings changed in SQL Server 2019.

Setting MAX_PLANS_PER_QUERY and WAIT_STATS_CAPTURE_MODE

When you enable Query Store in any version of SQL Server, by default the value for MAX_PLANS_PER_QUERY is 200. As mentioned in my Query Store Settings post, I recommend leaving this setting at the default value. The only case where I would change it is if I find that I have queries with more than 200 plans. If you are running SSMS 18.3 or below, or Azure Data Studio (ADS), you can use:

USE [master];

GO

ALTER DATABASE [QS_Settings] SET QUERY_STORE (

MAX_PLANS_PER_QUERY = 500

);

GO

When you enable Query Store in SQL Server 2017 or 2019, WAIT_STATS_CAPTURE_MODE is enabled by default (remember that query-level wait statistics were not available in Query Store in SQL Server 2016). When you upgrade to SQL Server 2017 or 2019 from SQL Server 2016, and you have Query Store enabled, WAIT_STATS_CAPTURE_MODE is automatically enabled. If you want to turn it off for some reason, and you’re using SSMS 18.3 or below, or ADS, you can run:

USE [master];

GO

ALTER DATABASE [WideWorldImporters] SET QUERY_STORE (

WAIT_STATS_CAPTURE_MODE = OFF

)

GO

With SSMS 18.4, you now have the ability to change both options via the UI. In general, I recommend using the T-SQL because then it can go into change control, and you don’t have to worry about altering another setting by mistake.

CUSTOM Options for QUERY_CAPTURE_MODE

In SQL Server 2019 we now have the ability to customize queries that are captured based on execution count, total compile CPU time (ms), OR total execution CPU time (ms), within a defined window of time. This provides much more control than the AUTO option, which excludes queries based on internally-determined thresholds set by Microsoft, which are not documented. The CUSTOM option is a game-changer, as it allows those with ad hoc workloads to use Query Store and minimize the performance overhead.

Changing to CUSTOM

When upgrading a database from SQL Server 2016 or 2017 to SQL Server 2019, with Query Store already enabled, the value for QUERY_CAPTURE_MODE will not change. To enable and customize the options if you are running SSMS 18.3 or lower, or ADS, you can use:

USE [master];

GO

ALTER DATABASE [QS_Settings] SET QUERY_STORE (

QUERY_CAPTURE_MODE = CUSTOM,

QUERY_CAPTURE_POLICY = (

STALE_CAPTURE_POLICY_THRESHOLD = 1 HOURS,

EXECUTION_COUNT = 30,

TOTAL_COMPILE_CPU_TIME_MS = 1000,

TOTAL_EXECUTION_CPU_TIME_MS = 100)

)

GO

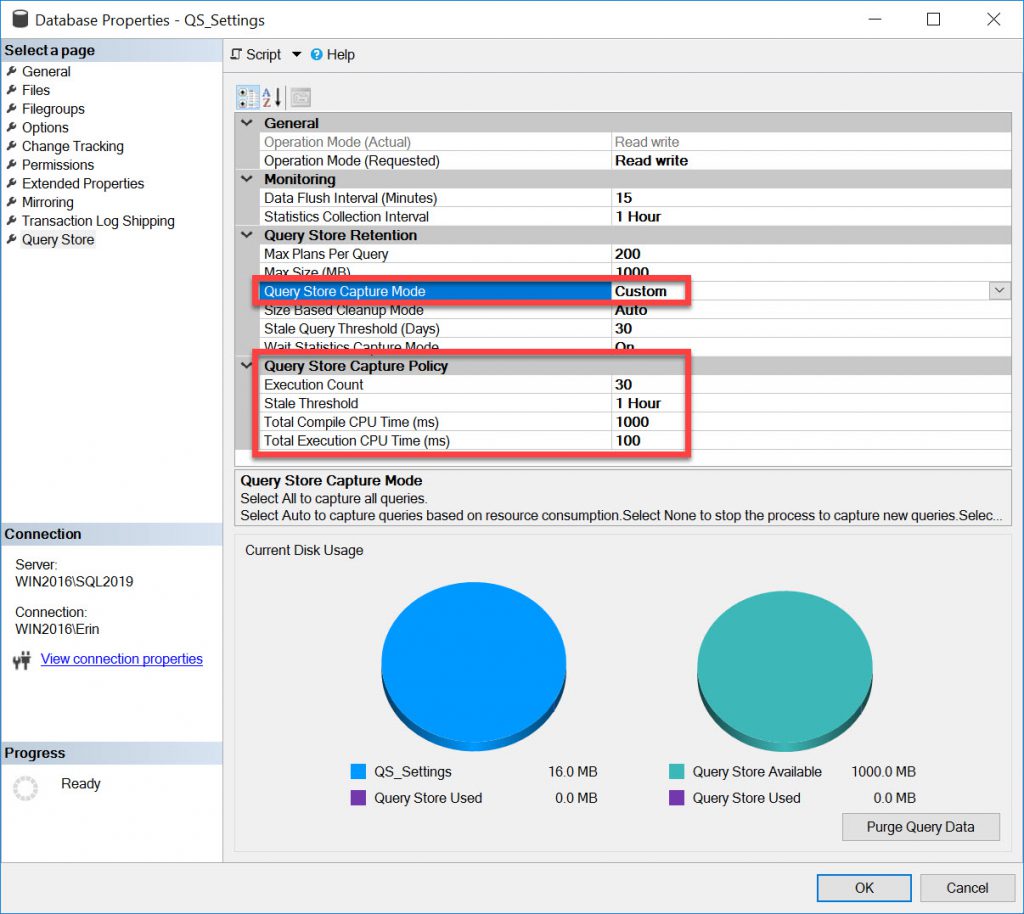

Within SSMS 18.4, when you change QUERY_CAPTURE_MODE to CUSTOM in the UI you will see:

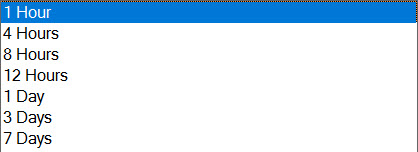

At this time, I do not have recommendations, based on data, for these options. I have one customer running SQL Server 2019, and I haven’t heard from many people who are running SQL Server 2019 and have Query Store enabled. Yet. Over time, I am hoping to get better information, but my initial suggestion is that you make a best estimate and then monitor. The default values are a good starting point. The minimum window of time (STALE_CAPTURE_POLICY_THRESHOLD) is one hour, and the default 1 day. Note that the drop-down for this option contains a list of values:

Unlike INTERVAL_LENGTH_MINUTES, these are not the only values that you can use. For example, you can set STALE_CAPTURE_POLICY_THRESHOLD to a value not in the list, such as two hours, using T-SQL:

USE [master];

GO

ALTER DATABASE [QS_Settings] SET QUERY_STORE (

QUERY_CAPTURE_MODE = CUSTOM,

QUERY_CAPTURE_POLICY = (

STALE_CAPTURE_POLICY_THRESHOLD = 2 HOURS)

);

GO

The value of 2 HOURS will then appear in the drop-down list going forward, which I find interesting, but that’s another rabbit hole to explore. Acceptable values for this option range from 1 hour to 7 days. While you can be clever and choose something like 42 hours, think about what window of time is appropriate for a business day, whether it’s an 8-hour day or a 24-hour day because you’re 24×7.

Thresholds

For EXECUTION_COUNT, the default value is 30, which is appropriate for an ad hoc workload as most of the time as queries only execute one time. Determining values for TOTAL_COMPILE_CPU_TIME_MS and TOTAL_EXECUTION_CPU_TIME_MS is a bit trickier. The defaults are 1000ms and 100ms, respectively. The settings are milliseconds, but the data in the Query Store table is stored in microseconds; remember this when you’re comparing your settings to what is in Query Store.

Think about the execution time first. Do you have a threshold where if a query requires more than X amount of CPU time, you’re concerned? Maybe 100ms is too low, and 1000ms (1 second) is more appropriate for your environment.

Compilation time is the hardest. I would not recommend setting it below 1000ms until you can look at captured data in Query Store. Understand that both compilation and CPU time are cumulative for these thresholds. If a query executions 5 times (below your EXECUTION_COUNT threshold, but takes 25ms of TOTAL_EXECUTION_CPU_TIME_MS each time, then on that fifth execution it will exceed 100ms and subsequent executions will be captured.

Summary

For anyone using SQL Server 2019, I highly recommend using SSMS 18.4, particularly if you use Query Store, or plan on using it. If you’re running SQL Server 2016 or 2017, it’s nice to see those additional settings in the UI, and in general I recommend staying current with releases for all the tools, assuming you don’t have business rules that limit or control what you can install.

17 thoughts on “Query Store Options in SSMS 18.4”

I was hoping for something that would let us block system queries from being captured. Ah well…

Bryant-

It’s possible that depending on the settings, you may be able to exclude system queries. Is there a User Voice item for the request to exclude system queries? If those are flooding your Query Store and make it difficult for you to find problematic user queries, I think that’s something the SQL Server team would want to know.

Erin

Great. Will try in the lab

Thanks for sharing Erin!

There’s a small typo in “When you upgrade to SQL Server 2019 or 2019 from SQL Server 2016”

Thanks for the catch, fixed it!

Hello Erin,

I have a problem with upgrade sql.

Thanks for sharing with us.

Thanks for the post!

I’m testing the CUSTOM capture mode with these settings

QUERY_CAPTURE_POLICY = (

STALE_CAPTURE_POLICY_THRESHOLD = 1 HOURS,

EXECUTION_COUNT = 10,

TOTAL_COMPILE_CPU_TIME_MS = 100000000,

TOTAL_EXECUTION_CPU_TIME_MS = 1000000000

)

And I still can see a simple query is captured the first time I run it.

This is SQL 2019 RTM and the database in 150 compat mode, am I missing something?

Thanks

Great information. I solve my error with sql.

Thanks for sharing with us.

thanks for well written post.

Hi Erin,

Great post and great presentation at Calgary user group last night. I have question about Query Store Capture Policy. Specifically what does Stale Threshold accomplish? Definition says “Defines the evaluation interval period to determine if a query should be captured.” My understanding is if I set this threshold to 2 hours that means from the time query store start capturing it will follow the capture policy and after 2 hours it will stop following the capture policy. Is that right? I am totally not sure what to make of it.

Hi Erin,

Great post and great presentation at Calgary user group last night. I have question about Query Store Capture Policy. Specifically what does Stale Threshold accomplish? Definition says “Defines the evaluation interval period to determine if a query should be captured.” My understanding is if I set this threshold to 2 hours that means from the time query store start capturing it will follow the capture policy and after 2 hours it will stop following the capture policy. Is that right? I am totally not sure what to make of it. Thanks.

Ameena-

The STALE_CAPTURE_POLICY_THRESHOLD option is the window of time within EXECUTION_COUNT, TOTAL_COMPILE_CPU_TIME_MS and TOTAL_EXECUTION_CPU_TIME_MS are measured.

If STALE_CAPTURE_POLICY_THRESHOLD is 2 hours, then within that two hour window (e.g. 12PM to 2PM), Query Store is monitoring the counts, compile cpu and execution cpu for each query to see if those thresholds are exceeded within that 2 hour window. If so, then the, query is captured in QS. At the end of the window, everything resets. So if a query is not captured between 12PM and 2PM because none of those thresholds are exceeded, it could be captured between 2PM and 4PM if any threshold is exceeded.

Hope that helps.

Erin

Thank you. It helped.

Great post and great presentation.

I solve my error with sql.

Thanks for sharing with us.

Erin,

for the max_plans_per_query setting which is defaulted as 200, say only 10% of the queries running in our system have 300 plans for example, should I adjust the max to 300 to accommodate that 10% of queries. On the other hand, say i keep the default at 200, for that 10% queries, would querystore just keep the latest 200 plans, and what is the downside of it? I am trying to determine if there is any implication of increasing the max_plans_per query to a higher and higher value, if there is, I need to drop a line at some point for the max value and troubleshoot that query.

Wing

Wing-

If you want to capture all possible plans, then you would need to increase the value for max_plans_per_query. The only implication is that more plans require more space, so it will affect the size of your Query Store.

Erin