Last week on SQLPerformance.com I blogged about ad hoc workloads and how they impact performance, noting plan cache bloat, and I had a comment asking about SQL Server plan cache limits. Kimberly mentions them in her classic post Plan cache and optimizing for adhoc workloads, but I thought I’d put the information into a blog post because I always have trouble finding the original references: Plan Caching in SQL Server 2008 (which is still accurate for SQL Server 2019, as far as I know) and Plan Cache Internals.

There are two limitations related to the plan cache:

- Number of entries

- Total size in MB (based on server memory)

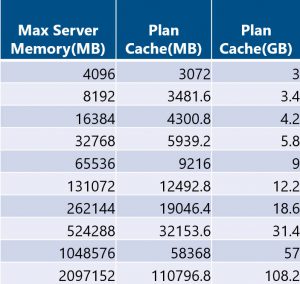

By default, the plan cache is limited to 160,036 total entries (40,009 entries per bucket), and size based on max server memory (for SQL Server 2008+ and SQL Server 2005 SP2):

75% of visible target memory from 0 to 4GB

+ 10% of visible target memory from 4GB to 64GB

+ 5% of visible target memory > 64GB

Here’s the math:

If you have an ad hoc workload, you’ll often hit the max number of entries before you hit the space limit, particularly if you have the optimize for ad hoc workloads server option enabled, which stores the plan stub for an ad hoc query on initial execution, rather than the entire plan (the plan stub consumes less space, so this is an attempt to reduce bloat).

For pure ad hoc workloads, if you’re seeing SOS_CACHESTORE spinlock contention, you can use trace flag 174 to increase the number of entries to 160,001 per bucket (640,004 total), which is applicable for:

- SQL Server 2012 SP1 CU14+

- SQL Server 2012 SP2 CU5+

- SQL Server 2014 CU2+

- SQL Server 2014 SP1 CU1+

- SQL Server 2016+

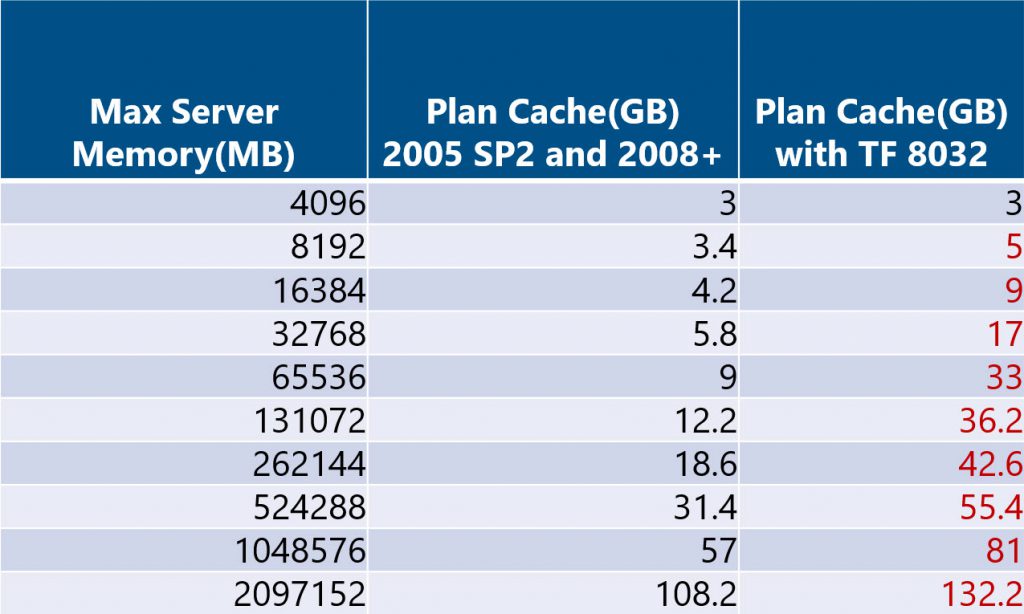

Very rarely, I have seen instances where customers have used trace flag 8032 to increase the total size of the plan cache. This trace flag uses the plan cache limits for SQL Server 2005 RTM:

75% of visible target memory from 0 to 4GB + 50% of visible target memory from 4GB to 64GB + 25% of visible target memory > 64GB

The math is below, but note that this can significantly affect the memory available for the buffer pool and this is something I don’t typically recommend…use with caution.

Summary

If you’re seeing plan cache bloat due to an ad hoc workload, take note of the space consumed by your plan cache and consider enabling the optimize for ad hoc setting, and possibly enabling TF 174. Both of those are short term fixes – ideally you look to parameterize stable queries that are executed most frequently to reduce the number of entries in the plan cache.

15 thoughts on “SQL Server Plan Cache Limits”

Hi, Erin. thanks for the info. in my SQL2014 server with 1 TB memory, I have over 82,000 plans in cache, and don’t have the trace flags that you mentioned enabled. Any chance that the max count is adjusted as memory increases as well? I used the following query to get my counts:

SELECT ‘@RETURN_PLAN_CACHE_SUMMARY’ AS OUTPUT_TYPE

, RP.name AS RESOURCE_POOL

, cacheobjtype AS CACHE_TYPE

, OBJTYPE AS OBJECT_TYPE

, CASE WHEN USECOUNTS = 1 THEN ‘SINGLE USE’ ELSE ‘MULTI USE’ END AS PLAN_REUSE

, SUM(CAST(USECOUNTS AS bigint)) AS USE_COUNT

, COUNT_BIG(*) AS PLAN_COUNT

, CAST(SUM(CAST(USECOUNTS AS bigint)) * 1.0/COUNT_BIG(*) AS DECIMAL(38, 2)) AS AVG_USES_PER_PLAN

, CAST(SUM(SIZE_IN_BYTES* 1.0/1048576) AS DECIMAL(38, 2)) AS TOTAL_PLAN_MB

FROM sys.dm_exec_cached_plans CP

JOIN sys.resource_governor_resource_pools RP ON RP.pool_id = CP.pool_id

WHERE cacheobjtype like ‘COMPILED PLAN%’

GROUP BY cacheobjtype

, OBJTYPE

, CASE WHEN USECOUNTS = 1 THEN ‘SINGLE USE’ ELSE ‘MULTI USE’ END

, RP.NAME

ORDER BY OBJECT_TYPE, PLAN_REUSE, RP.NAME

John,

Good catch! I didn’t specify that it is 40,009 per bucket, and there are different counters (SQL Plans, Object Plans, Bound Trees, Extended SPs). The total number of entries possible is 160,036, so you are within that limit, and I assume if you break it out by counter, you should have less than 40K for each. I updated the post to clarify that, thanks!

Erin

Hi Erin,

My situation has many small databases on a server with 400GB RAM, yet plan size hovers around 10 gigabytes. PLE is > 3000 and machine has 50GB free RAM for operating system.

I tested on SQL 2017 (RTM-CU17) whilst investigating a rather severe plan eviction problem, with a 64GB RAM desktop with max memory set to 50GB, I could not get > 4270MB allocated to Adhoc Compiled Plans.

From your table I should get between 5939 and 9216MB. Do you have any advice as to why this is the case?

I then used TF8032 to get 9376MB (and 150,000 plans as per my test).

create database pollution;

use pollution;

create table Poll (ution varchar(80), Another varchar(80), AndAnother datetime)

declare @Loop int = 0;

while @Loop < 150000 BEGIN

declare @cmd nvarchar(1000) = 'declare @i varchar(80) = (select count(distinct Another) from dbo.poll where substring(ution, 1, 3) = ''' + cast(@Loop as varchar(6)) + ''' OR ution = ''B'' AND ution like ''%@#!#%'')'

exec sp_executesql @cmd;

set @loop +=1;

end

select cacheobjtype,objtype, count(*), sum(cast(size_in_bytes as bigint)) /1024/1024 MB from sys.dm_Exec_cached_Plans

cross apply sys.dm_Exec_sql_Text(plan_handle)

group by cacheobjtype,objtype

Brett-

Belated reply here, sorry about that. The cache size is either number of plans or GB size, so if you’re hitting the max number of plans but it’s only 4GB, then it would be because you maxed out the number of plan cache entries. Or am I misunderstanding what you’re seeing?

Erin

Hi Erin,

I can’t agree with you regarding total number of entries in Plan Cache. Why?

I have a situation where number of SQL Plan entries reach limit of 160,036 (this is without Object Plan), and this is because of huge number of AdHoc queries. Pay attention on result from sys.dm_exec_cached_plans:

– Number of AdHoc queries is ~150.000

– Number of Prepared statements is ~10.000

– Number of Proc is ~2.000

– Total number of SQL Plans (AdHoc + Prepared) = ~160,036 (CONSTANTLY between 160,016 and 160,042)!!!

– Total number of SQL Plans (AdHoc + Prepared) + Object Plans (only Proc) is ~162,000 (CONSTANTLY over 162,000) !!!

Because of that, every time when number of SQL Plans reach 160,036, we have memory pressure (few times per day) and one of consequences is decreasing number of Procedures from Object Plans. And this is really not a good solution… that huge number of AdHoc queries has impact on number of Procedures, especially when we have 1TB RAM on server (570GB dedicated for SQL Server), where total size of cache is 22GB and it isn’t limitation based on math.

After 3 month of conversation with Microsoft Support, they finally wrote that each portion of cache, SQL Plans and Object Plans have its own limit of the 160,036. Whole that story isn’t well documented, and because of that we have wrong information.

And I want to add, that internal memory pressure investigation is suffering, because:

– you can find that you have a pressure,

– you can find what is consequence of memory pressure (e.g. decreasing number of proc in cache),

– you can find memory notifications about pressure (with XE),

– BUT, you can’t find exactly which memory clerk sent those notification and you can’t prove what is the root cause of pressure. You can guess, but you can’t prove. This is because you can prove with any method, and this is because it is not well documented.

And, this is not only one “strange” case regarding memory pressure.

Hi-

To make sure I understand…

You disagree with the number of entries in the Plan Cache because you are seeing 162,000+ entries in your TOTAL plan cache (with max server memory set to 570GB). So your total number of 162,000 is a little bit higher than 160,000, which is what is referenced in the Microsoft documentation.

Is that what you are saying?

Erin

Yes, exactly. I do not agree with Microsoft documentation because they wrote, I quote them: “Therefore, the maximum number of entries that can fit inside the SQL Server plan cache is 160,036.” This is not a true, and I have a proof. Please, look at this (I executed those 8 queries in the same time):

select count(*) from sys.dm_exec_cached_plans –164,600

select count(*) from sys.dm_exec_cached_plans where objtype in (‘adhoc’,’prepared’,’proc’) –162,359

select count(*) from sys.dm_exec_cached_plans where objtype in (‘adhoc’,’prepared’) –160,032

select count(*) from sys.dm_exec_cached_plans where objtype in (‘adhoc’) –147,979

select count(*) from sys.dm_exec_cached_plans where objtype in (‘prepared’) –12,053

select count(*) from sys.dm_exec_cached_plans where objtype in (‘proc’) –2,327

select sum(entries_count) from sys.dm_os_memory_cache_counters where name IN ( ‘SQL Plans’) –160,034 (SQL PLans = ~ (adhoc + prepared)), this is very close to the limit

select sum(entries_count) from sys.dm_os_memory_cache_counters where name IN (‘Object Plans’) –2,333 (Object Plans = ~ proc)

Finally, after few months of conversation with them, one of their engineers spent some time to find (probably in code) that “SQL PLans” as part of Plan Cache has its own limit of the 160,036 entries, and that “Object Plans” as separate part of the Plan Cache has its own limit of the 160,036 entries.

I have more interesting details, but this is maybe not the place to spread that story.

Ok, then you have some inside information which I don’t have first hand knowledge of. Thanks for sharing!

You’re welcome.

Hello Erin,

I haven’t clear the difference between plan cache, data cache and Buffer Pool.

I start from these definitions:

Data cache: select * from sys.dm_os_buffer_descriptors

Plan cache: select * from sys.dm_exec_cached_plans

My questions:

– Is Plan cache a part (included) of buffer pool?

– Does Data cache coincide with buffer pool?

– If I run DBCC DROPCLEANBUFFERS I clean data cache, but not the plan cache. Why?

Thanks very much

Alessandro

SQL Server has multiple memory clerks which allocate memory. One is SQLBUFFERPOOL, which is the buffer pool you mention. Another is SQLQUERYPLAN, which is the plan cache. They are different entities within SQL Server, so no, the plan cache is not part of the buffer pool. When you say “data cache”, if you mean data and index pages, then that IS what’s IN the buffer pool (data and index pages that have been requested). DBCC DROPCLEANBUFFERS only drops clean pages from the buffer pool. If you want to clear the plan cache (not recommended in a production environment), you run DBCC FREEPROCCACHE.

Hi Erin, is there any tradeoff regarding the trace flag 174? Have you seen any case where you enabled it and has caused any side effects? Especially considering environments with a large amount of memory, 2TB+ for example?

I have not seen a scenario where TF 174 has had a negative impact, but I’ve only seen it enabled in a handful of environments. Thus, your mileage may vary.

Hi Erin,

We have enabled Trace flag 174 and Trace flag 8032 on SQL 2016 environment as per microsoft support suggestion. We are now moving to SQL2019+ CU9.Do you recommend these trace flags enabled on SQL2019 as well?. Please let me know

Kevin-

Unfortunately, I don’t have enough info about your environment or your workload to feel comfortable making a recommendation one way or the other.

Erin