During the PASS Summit in November I presented a session on Demystifying Database Statistics (if you attended Summit but missed it, you can stream it from here). During the session I went through a demo that showed the automatic update of a statistic. The database had the Auto Update Statistics option enabled, and I had added almost 25,000 rows to a table with 121,000 rows. The number of rows added via the bulk import was just over the limit to trigger an auto-update of statistics for the next query, which I showed. I had many people ask why statistics did not update immediately after the bulk load of the 25,000 rows completed, so I want to step through the demo that I did at Summit to clarify when an automatic update will occur.

The Setup

Start with a copy of the AdventureWorks2012 database, which you can download from CodePlex. Ensure that the Auto Update Statistics option is enabled:

IF (SELECT COUNT(*) FROM [sys].[databases] WHERE [name] = 'AdventureWorks2012' AND [is_auto_create_stats_on] = 0) = 0 BEGIN ALTER DATABASE [AdventureWorks2012] SET AUTO_UPDATE_STATISTICS ON END;

We’re going to use a copy of the Sales.SalesOrderDetail table for the demo. After we create the table, we will check to see when statistics last updated. We can use various methods to check statistics date, such as DBCC SHOW_STATISTICS or STATS_DATE, but since the release of SP1 for SQL Server 2012, I have exclusively used sys.dm_db_stats_properties to get this information.

USE [AdventureWorks2012]; GO SELECT * INTO [Sales].[TestSalesOrderDetail] FROM [Sales].[SalesOrderDetail]; GO CREATE CLUSTERED INDEX [PK_SalesOrderDetail_SalesOrderID_SalesOrderDetailID] ON [Sales].[TestSalesOrderDetail] ([SalesOrderID], [SalesOrderDetailID]); GO CREATE UNIQUE NONCLUSTERED INDEX [AK_TestSalesOrderDetail_rowguid] ON [Sales].[TestSalesOrderDetail] ([rowguid]); GO CREATE NONCLUSTERED INDEX [IX_TestSalesOrderDetail_ProductID] ON [Sales].[TestSalesOrderDetail] ([ProductID]); GO SELECT OBJECT_NAME([sp].[object_id]) AS "Table", [sp].[stats_id] AS "Statistic ID", [s].[name] AS "Statistic", [sp].[last_updated] AS "Last Updated", [sp].[rows], [sp].[rows_sampled], [sp].[unfiltered_rows], [sp].[modification_counter] AS "Modifications" FROM [sys].[stats] AS [s] OUTER APPLY sys.dm_db_stats_properties ([s].[object_id],[s].[stats_id]) AS [sp] WHERE [s].[object_id] = OBJECT_ID(N'Sales.TestSalesOrderDetail');

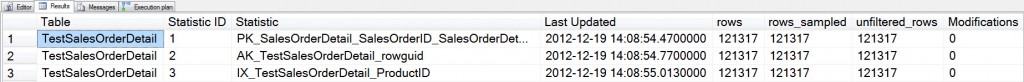

Here’s the output:

This is my go-to DMV for statistics because in addition to including the date that statistics were last updated and row information, I also get a count of modifications since the last statistic update. I just created the table, so the Last Updated date is current, and I have not made any changes so the modification count is 0.

Invalidating Statistics

For my demo I want to bulk insert enough rows to invalidate the statistics. SQL Server has pre-determined thresholds where it considers statistics to be out-of-date and therefore invalid. The technical article, Statistics Used by the Query Optimizer in Microsoft SQL Server 2008, documents these thresholds. For a regular table, statistics are out of date when:

o The table size has gone from 0 to >0 rows (test 1).

o The number of rows in the table when the statistics were gathered was 500 or less, and the colmodctr of the leading column of the statistics object has changed by more than 500 since then (test 2).

o The table had more than 500 rows when the statistics were gathered, and the colmodctr of the leading column of the statistics object has changed by more than 500 + 20% of the number of rows in the table when the statistics were gathered (test 3).

The Sales.SalesOrderDetail table has 121317 rows:

(121317 * 0.20) + 500 = 24764

The bulk insert below loads 24775 rows, which should be enough to invalidate statistics. Download the .txt file for the bulk insert here.

BULK INSERT AdventureWorks2012.Sales.TestSalesOrderDetail FROM 'C:\SQLStuff\Statistics\Data\sod.txt' WITH ( DATAFILETYPE = 'native', TABLOCK ); (24775 row(s) affected)

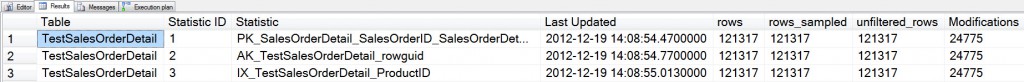

After the bulk load completes, re-run the query against sys.dm_db_stats_properties and review the output:

The statistics have not updated, but the modification counter has changed, as expected. The statistics are now out of date based on the threshold defined previously, and we would expect that a query or data modification against Sales.TestSalesOrderDetail would trigger an update of statistics. But before we try that, let’s review what causes the automatic update.

The aforementioned article states:

The statistics auto update is triggered by query optimization or by execution of a compiled plan, and it involves only a subset of the columns referred to in the query.

When a query is first compiled, if the optimizer needs a particular statistics object, and that statistics object exists, the statistics object is updated if it is out of date. When a query is executed and its plan is in the cache, the statistics the plan depends on are checked to see if they are out of date. If so, the plan is removed from the cache, and during recompilation of the query, the statistics are updated. The plan also is removed from the cache if any of the statistics it depends on have changed.

To be clear, if a query plan exists in cache and that plan uses specific statistics, when the query executes SQL Server checks to see if any of the statistics used in the plan are out of date. If they are, then the automatic update of those statistics occurs.

If a plan does not exist in cache for a query, then if the optimizer uses a statistics object that is out of date when the plan compiles, SQL Server will automatically update those statistics.

Invoking the Automatic Update

We have not run any query against Sales.TestSalesOrderDetail except our bulk insert. At the time that the query compiled for the bulk insert, no statistics for Sales.TestSalesOrderDetail were out of date; therefore no statistics required an automatic update.

Now let’s issue an update against Sales.TestSalesOrderDetail that will change the ProductID for a specific SalesOrderID, and then query sys.dm_db_stats_properties:

UPDATE Sales.TestSalesOrderDetail SET ProductID = 717 WHERE SalesOrderID = 75123; GO (3 row(s) affected)

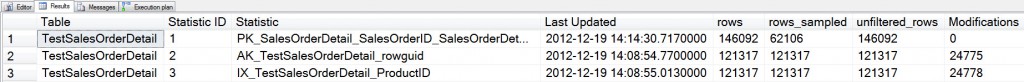

Output:

We can see that the PK_SalesOrderDetail_SalesOrderID_SalesOrderDetailID statistic object automatically updated. We could simply assume that the optimizer used this object in the plan. However, in SQL Server 2012 we can look at the plan XML and confirm this.

In his post, Statistics used in a cached query plan, Fabiano Neves Amorim describes a method to capture statistics information from the plan. Please note the following:

- This information is only available in SQL Server 2012 and higher.

- This query requires use of an undocumented trace flag. I do not recommend using this trace flag in a Production environment.

- This query interrogates the plan cache. The plan cache may be very large for your system, depending on the amount of memory on the server. Before querying the plan, I recommend setting the transaction isolation level to READ UNCOMMITTED, and also recommend using OPTION (MAXDOP 1) to limit CPU utilization. The query may take longer to execute, but it reduces the impact on other queries executing concurrently.

If we query the plan cache using Fabio’s query, we get the following information:

SET TRANSACTION ISOLATION LEVEL READ UNCOMMITTED;

GO

DBCC TRACEON (8666);

GO

WITH XMLNAMESPACES ('http://schemas.microsoft.com/sqlserver/2004/07/showplan' as p)

SELECT qt.text AS SQLCommand,

qp.query_plan,

StatsUsed.XMLCol.value('@FieldValue','NVarChar(500)') AS StatsName

FROM sys.dm_exec_cached_plans cp

CROSS APPLY sys.dm_exec_query_plan(cp.plan_handle) qp

CROSS APPLY sys.dm_exec_sql_text (cp.plan_handle) qt

CROSS APPLY query_plan.nodes('//p:Field[@FieldName="wszStatName"]') StatsUsed(XMLCol)

WHERE qt.text LIKE '%UPDATE%'

AND qt.text LIKE '%ProductID%';

GO

DBCC TRACEOFF(8666);

GO

Output:

The output confirms that the optimizer used the PK_SalesOrderDetail_SalesOrderID_SalesOrderDetailID statistic object in the query plan, and because it was out of date, SQL Server automatically updated it.

If you are running earlier versions of SQL Server, you can see what statistics the optimizer loads using the undocumented trace flags 9292 and 9204. Paul White has an excellent post, How to Find the Statistics Used to Compile an Execution Plan, which I recommend reading if you’re interested.

One thing I want to point out: the IX_TestSalesOrderDetail_ProductID statistic, which has ProductID as its key, did not update automatically when the UPDATE query executed. This is expected. Even though the statement modified ProductID for three rows (the modification counter for the statistic increased from 24775 to 24778), the optimizer did not use that statistic in the plan. If the plan does not use a statistic, the statistic will not automatically update, even if the query modifies columns in said statistic key.

Summary

As I stated initially, I wanted to write this post to clear up any confusion surrounding automatic updates for statistics. Even if a statistic becomes outdated as the result of a modification, it will not automatically update after the modification completes. The statistic will automatically update the next time a query plan uses it.

To reiterate (yes, this might be overkill), SQL Server will automatically update a statistic when:

- A query compiles for the first time, and a statistic used in the plan is out of date

- A query has an existing query plan, but a statistic in the plan is out of date

For those that attended my session at Summit, I hope this helps address any questions you might have still had. If not, please leave a comment and I will get back to you!

35 thoughts on “Understanding When Statistics Will Automatically Update”

I enabled TF 2371 today on our last SQL2008R2 SP1 instance ( hoping to apply SP2 next week ).

Great post, Erin !

Erin,

we are running SQL Server 2008 R2 Sp2 and the AUTO update Statistics feature has been enabled, but we still get instances where the statistics were not updated automatically and the performance of the system is terrible to say the least , even so far as indicating that indexes are missing, but when we look in the catalog , the indexes are there . Could it be the system is too busy to update the the statistics ? The correct Clustered indexes were also defined . We do index rebuilds twice a week and run Integrity checks every second day . The correct Clustered indexes were also defined . The server has 32 procesoors and 2 00 gig of memory . The server serves a 200 seat callcenter. Any ideas ?

Hi Lian –

Without looking at your system it’s hard to say what’s going on. Have you confirmed that the statistics (and indexes) are not hypothetical? You can check the is_hypothetical column in sys.indexes, and check the name (e.g. start with _dta_index_ or _dta_stat_). The system wouldn’t be too busy to update statistics – the updates might occur asynchronously if the Auto Update Statistics Asynchronously option is selected. How do you confirm that stats should get updated (e.g. how do you know when they should have been updated automatically but were not)?

Erin

HI Erin,

Thank you so much for this post. very well explained.

Sandip

Hi Erin,

I recently attended a webcast by you on the topic of identifying duplicate statistics, ie. single-column statistics generated by SQL prior to creation of an index with a leading key on the same column.

While I understand that these stats offer no benefit and should be dropped, I’m still not sure what other impact they might have if we were to keep them in the database. Could there be a situation where a duplicate single-column stat is picked over the corresponding index in query plan generation?

Also, how can one calculate the size footprint of these duplicate stats in the database?

Being able to get this value would strengthen the argument for dumping those.

Thank you for the very informative session and this blog post!

Marios

Hi Marios-

There *could* be a situation where the duplicate single-column statistics is chosen instead of the index statistic for a query plan, or vice versa…and the concern is if one is out of date (and therefore not representative of the data in the column) and therefore it generates a less-than-optimal query plan.

To calculate the size footprint of duplicate stats you’d need to dig into some of the internal tables. I haven’t done that, might be worth investigating!

Erin

Hi Erin,

using the information found in the new dmv sys.dm_db_stats_properties I scheduled a job every night to update statistics when the number of modifyed rows exceeds a defined percentage threshold.

I am uning a threshold of 2% for tables with a consistent ( > 10000 ) number of rows.

Is it a correct value?

Thank you for your great post!

Giacomo

Hi Giacomo-

Thanks for reading! There is no set “correct value” to use for a threshold here – it’s going to be based on your data and its distribution. For some statistics, updating after 2% of the data has changed might be optimal because of skew in the data. For other statistics (e.g. those on unique columns like an identity), I wouldn’t update that frequently – maybe after 10% change. And it would also depend on the number of rows in the table.

I hope that helps,

Erin

Great post! keep it up.

Do you recommend updating stats after restoring a backup from a 2005/2008 instance to 2012?

Dustin-

I would update statistics after restoring from a backup of a lower version. This is a particularly important when going from SQL 2000 to a higher version, as there were changes in the stats blog, but even for later versions I tend to recommend it. Sometimes it’s tricky because of the maintenance window available, but if possible, definitely work in that stats update.

Erin

Thanks, Very good information I got.

And I want know, whether schema change of a table (adding new / (deleting an existing) column) also qualifies for UPDATE STATS?

Rajendra, here you go: https://www.sqlskills.com/blogs/erin/testing-automatic-updates-statistics/

🙂

Erin

Hey Erin, I was just trying to figure out how two indexes on a table had a percent change over 1000 percent yet the statistics did not recompute via autostats. I was looking to see if the STATISTICS_NORECOMPUTE was ON (yes, I do hate double negatives) was set on the index, but that was not the case.

If you step back from the forest and give it some thought the concept makes total sense. If the threshold for an autostats is 500 + 20 percent, autostats could go into a cycle of firing at 20 percent + 500, + 501, +502, etc.

The other related db level switch, Auto Update Stats Asynchronously, by default (FALSE) runs the update stats on outdated stats at PLAN creation/procedure compile time. To avoid the time to recompute the stats (and risk a bad plan) turn this switch ON.

I’ve read the landmark article (statistics used by the optimizer) but the devil is in the details, and it is easy to overlook these nuances.

Great post… it was a huge breakthrough for me.

Hi Dave!

Great to hear from you, and I’m glad to hear that this help was helpful to you!

One thing to note, if you have a table where you have 1000% change in data, AND you have Auto Update Statistics ENABLED, then I would expect stats to update automatically, but it won’t happen until you run a query that uses that statistic. I’m clarifying because the 20% + 500 is not a rolling value, it’s fixed based on last stats update. So, if you have 10000 rows in a table, that 20% + 500 is 2500 rows. When there are 10001 rows in the table, as long as you haven’t updated stats, that update threshold doesn’t change to 2500… It’s fixed based on the number of rows in the table at the last stats update.

Let me know if that doesn’t make sense!

Erin

Hi Erin,

Great post, I found it searching for more info regarding statistics maintenance.

This week we had a performance issue with a query on our production server (SQL2012 SP1).

Reason : The statistics were not uptodate. (after updating statistics the time went from over 1 minute to 0 seconds)

Since we execute every night sp_updatestats in each database this supprised me, until I figured out that sp_update_stats does not look into the statistics of indexed views (which the query used).

So I tried to make a script of my own to update statistics if necessary.(SQL2012 and higher)

In this script I also use kind of the same logic as in auto update statistics, so unlike in sp_update_stats it does not always update statistics if only 1 record has changed. (limit maintenance time)

Can you give me feedback on my used logic/script, you may share it if it seems worthwile.

Logic :

First thing I do is retrieve all views and tables in the database and the amount of records.

I have a @Sensitive parameter : to make my check x times more sensitive than the normal check which automatic update does

I retrieve all statistics which belong to user tables or views which

– are not linked to an index (I do index maintenance afterwards, so this updates the corresponding statisics with a full scan)

and

(

– rowcount has gone from 0 to more

or

– rowcount was (500/@Sensitive)

or

– rowcount was > 500 and modificationw > ((500 + rowcount/100*20)/@Sensitive)

or

– it’s a multiple column statistic (other fields exept the leading field can be modified and will not appear in the modifications) and the last update is more than 7 days ago

or

– sys.dm_db_stats_properties returns NULL (update stats was executed but table/view was empty) and there are now records in the table

)

The script :

DECLARE @Sensitive int = 5;

WITH CTE_TableCount as

(

SELECT [object_id], ObjectSchema, ObjectName, MyCount, TableSizeInMb = MyPageCount*8/1024.0 FROM

(

SELECT ST.[object_id]

, ObjectSchema = OBJECT_SCHEMA_NAME(ST.[object_id])

,ObjectName = OBJECT_NAME(ST.[object_id])

,MyCount= SUM(ST.row_count)

,MyPageCount = SUM(ST.used_page_count)

FROM sys.dm_db_partition_stats ST

INNER JOIN sys.objects OB ON ST.[object_id] = OB.[object_id] –inner join because there are some internal tables which does not exist in sys.objects, so we exclude them by the inner join

WHERE ST.index_id 0

OR IQ.[rows] (500/@Sensitive)

OR IQ.[rows] > 500 AND modification_counter > ((500 + IQ.[rows]/100*20)/@Sensitive)

OR CASE WHEN SC.stats_id IS NOT NULL THEN 1 ELSE 0 END = 1 AND DATEDIFF(dd,IQ.last_updated, sysdatetime()) > 7 –update if multiplecolumn stats and last update is more than 1 week ago

OR IQ.[rows] IS NULL AND TC.MyCount > 0

)

Best Regards,

Franky

Thanks for the info about sp_update_stats not catching indexed views – do you have a blog? You should write a post about it! 🙂

Erin,

Looks like my script did not make it in 1 piece, so I’ll retry

DECLARE @Sensitive int = 5;

WITH CTE_TableCount as

(

SELECT [object_id], ObjectSchema, ObjectName, MyCount, TableSizeInMb = MyPageCount*8/1024.0 FROM

(

SELECT ST.[object_id]

, ObjectSchema = OBJECT_SCHEMA_NAME(ST.[object_id])

,ObjectName = OBJECT_NAME(ST.[object_id])

,MyCount= SUM(ST.row_count)

,MyPageCount = SUM(ST.used_page_count)

FROM sys.dm_db_partition_stats ST

INNER JOIN sys.objects OB ON ST.[object_id] = OB.[object_id] –inner join because there are some internal tables which does not exist in sys.objects, so we exclude them by the inner join

WHERE ST.index_id 0

OR IQ.[rows] (500/@Sensitive)

OR IQ.[rows] > 500 AND modification_counter > ((500 + IQ.[rows]/100*20)/@Sensitive)

OR CASE WHEN SC.stats_id IS NOT NULL THEN 1 ELSE 0 END = 1 AND DATEDIFF(dd,IQ.last_updated, sysdatetime()) > 7 –update if multiplecolumn stats and last update is more than 1 week ago

OR IQ.[rows] IS NULL AND TC.MyCount > 0

)

Hi, Erin.

Congratulations for this great post.

I’m working on manual update statistics and I have a doubt. There are some really big busy tables (about tens milions records) and I found some statistics with more than 25% of row change than hadn’t been updated for weeks.

So, does it mean that those statistics aren’t used in any plan, so I shouldn’t update them manually to avoid useless workload?

Does this apply also to auto created statistics?

Thank’s a lot.

Carles.

Carles-

If you have stats with more than 25% modification, and they haven’t been updated, AND you have Auto Update Statistics enabled, AND you don’t have a plan which updates them manually…then yes, I would assume that those stats haven’t been used by any plan, which is why they haven’t been updated.

If those statistics are tied to indexes, then I would start checking index usage (dm_db_index_usage_stats) to see if those indexes are being used (seeked, scanned, lookups) and trend that over time. If they’re not being used, then you could consider removing them, so you don’t have the overhead of maintaining them.

If those stats are auto-created and are not tied to indexes, then I would hope they would be used at some point, and I would probably still update them. However, I would also consider not updating them and then monitoring them over time to see if the auto-update occurs. It’s possible to have stats that get created for one ad-hoc query that gets run, and then the stat never gets used again. Tracking stats usage is a lot harder than tracking index usage.

And I’m not sure about your last question “Does this apply to auto created statistics?” Does WHAT apply to auto-created statistics?

Thanks!

Erin

Great Post Erin. I have a question on “why stats are not being updated for a query that is already cached” and hoping if you would be able to share your thoughts. DB has auto update and auto create stats.

below is the scenario

–step 1 : Load 10k rows

–step 2 :run a simple query

Select * from TestTable where name =’Microsoft’ (the plan is created and cached(simple param))

–Step 3: Add 12k rows

–Step 4:run the same query as in step 2

Select * from testTable where name =’Microsoft'( the plan created above is reused)

but the statistics are not updated as the modification counter still returns 12k. I thought, in step 4, it should update the statistics.

I am running this on SQL2008R2 SP2 and I am not sure if this any hint, I used allen’s hint and enabled Trace Flag 9292,9204 and I can see after the Step 2, the output message – Stats header and Stats loaded referenced this column but after the step 4 , I did not see any ouput message on the stats referred.So, I think the stats were not used?? I could not comprehend completely here.

Thank you.

Great Post Erin. I have a question on “why stats are not being updated for a query that is already cached” and hoping if you would be able to share your thoughts. DB has auto update and auto create stats.

below is the scenario

–step 1 : Load 10k rows

–step 2 :run a simple query

Select * from TestTable where name =’Microsoft’ (the plan is created and cached(simple param))

–Step 3: Add 12k rows

–Step 4:run the same query as in step 2

Select * from testTable where name =’Microsoft'( the plan created above is reused)

but the statistics are not updated as the modification counter still returns 12k. I thought, in step 4, it should update the statistics.

I am running this on SQL2008R2 SP2 and I am not sure if this any hint, I used allen’s hint and enabled Trace Flag 9292,9204 and I can see after the Step 2, the output message – Stats header and Stats loaded referenced this column but after the step 4 , I did not see any ouput message on the stats referred.So, I think the stats were not used?? I could not comprehend completely here.

Thank you.

Hi Sudhir-

Without trying to replicate this in the same build you’re running, with the same schema, I can’t say for certain what you’re seeing. Did you have an index on this table? If so, was it on the name column, and was the modification counter at 12K for that index’s statistic? I could try and repro, but would need the entire code.

Thanks,

Erin

Hey Erin,

This is my personal observation, obviously your vast experience may be different. I believe we are more haunted by autocreated/systemgenerated stats than user defined and also with stats that were sampled at lower sample set, most auto-created stats are sampled at lower data set, (I’ve seen values around 5% of entire rows), I have also seen some user stats misbehaving, mostly cause of sample size.

I was under the impression sp_updatestats with “Full Scan” will overcome this lower sample rate and will update even system stats using all rows, unfortunately I dont see that happening in real life, also in your example entire 146,092 were not sampled, since this table was small we were able to get 62k sampled (about 42.5%) at run time, but on larger millions of records table this will go down even more, and now this stat with lower sample rate is stuck in DB till we get further 20%+ changes in table.

Coming to point Carles Nualart raised, how will optimizer find time to update stats will full resample for very large table at query run time? (tables with millions of records), I believe it will only do a quick sample, and also auto-generated stats are an estimate not a true picture for us, we will continue to suffer, and probably only way forward is to identify and update stats with full sample?

Just yesterday I was trying to fix slow query problem in a large partitioned table, and looked at estimated plan where optimizer though this particular partition in this table has only 70.4 records, where we actually had 3 million records, and it was doing a loop join vs hash/merge, same query worked fine when it queried other partitions. (btw that was the reason I googled to find out if “Bulk” insert do more damage than making constraints not trusted and I found your wonderful article)

I have many servers where now I have a dynamic SQL script that checks last stats date and update stats older than certain days (number of days changes per product/DB based on usage usually its from 2 to 30 days ), of course this is a BIG NO NO for most servers, we have large BI presence where data is loaded between 3 to 12 weeks and also our data is not really greatly skewed, so we can probably “afford” to do this, I know there are probably better way to look mod counters or use CDC, CT to see data changed and scope this stats update.

I will try to replicate some of this scenario and send you my POC for your valued feedback.

Thanks

… so this is what happens when you get distracted many times while writing a quick response, sorry my wordings were all over, and I quickly hit “post comment” so I can get to this other discussion. I hope you understand what I was trying to say 🙂

Hi Rizwan-

So, a couple comments…

First, when you run sp_updatestats, you don’t specify the sample size. SQL Server uses the default, so if the table is less than 8MB, it will be a FULLSCAN. If it’s larger than, it’s a smaller percentage, sometimes around 10%, sometimes much lower.

You are correct that on larger tables, this sample rate gets even smaller. There is no way to change that sample size unless you explicitly specify it (which you cannot do with auto-update).

For your large tables, you can have the stats updated earlier if you enable trace flag 2371 (see http://blogs.msdn.com/b/saponsqlserver/archive/2011/09/07/changes-to-automatic-update-statistics-in-sql-server-traceflag-2371.aspx). This lowers the threshold at which stats are updated for very large tables.

You asked, “how will optimizer find time to update stats will full resample for very large table at query run time?” Well, it doesn’t “find time”, it makes time. It samples a certain number of pages and however long it takes to read those pages, it takes… Once the stat has been updated, then the query will be compiled and run. If this is something you are concerned about, you can enable the auto update statistics asynchronously option, and this will delay the update of stats until after the query has run (so it won’t interfere with performance for that query).

You stated this: “auto-generated stats are an estimate not a true picture for us” – I’m not completely clear what that means. When statistics are captured, they are a representation of data in the column. Whether it’s completely accurate about the DISTRIBUTION of data depends on many factors, so yes, it could be that they don’t represent what’s truly in the column.

I always recommend proactively updating statistics with a job. Whether you look for a percentage of rows modified or something else (e.g. date), I would prefer to update statistics manually (via a job) at a scheduled time, with the “right” sample size. Now, the majority of the time, the default sample works well. In cases where it doesn’t, then you may need to have statistics update with a FULLSCAN. I have one customer where we run a FULLSCAN for about 5 tables, when more than 5% of the data has changed. It’s just for those 5 tables. All the rest use the default sample.

I hope that helps!

Erin

Hi Erin,

How stats are updated on Alwayson secondaries means they run Update stats when we run over primary server or update <> blog column= <>

Regards

Hi Sachin-

When the transaction that has the statistics update in it (meaning, the statistics blob in the system table was updated) is shipped over to the secondary and applied, then the statistics will be updated on the secondary. It’s the same as any other transaction/modification on the primary.

Hope that helps!

Erin

Hi Erin,

a really great post with good inspiration for my own thoughts. I found a – hopefully interesting – issue with updated stats when you use different types of indexes (UNIQUE or NON UNIQUE).

Let’s have a table with a unique index on ID and a non unique index on ZIP:

CREATE TABLE dbo.Customer

(

Id INT NOT NULL IDENTITY (1, 1),

Name VARCHAR(100) NOT NULL,

Street VARCHAR(100) NOT NULL,

ZIP CHAR(5) NOT NULL,

City VARCHAR(100) NOT NULL

);

GO

CREATE UNIQUE NONCLUSTERED INDEX ix_Customer_ID ON dbo.Customer (Id);

GO

CREATE NONCLUSTERED INDEX ix_Customer_ZIP ON dbo.Customer (ZIP);

GO

Now I fill the table with app. 10,000 records

INSERT INTO dbo.Customer WITH (TABLOCK)

(Name, Street, ZIP, CIty)

SELECT ‘Customer ‘ + CAST(message_id AS VARCHAR(10)),

‘Street ‘ + CAST(severity AS VARCHAR(10)),

severity * 1000,

LEFT(text, 100)

FROM sys.messages

WHERE language_id = 1033;

GO

I run the following code to check when Microsoft SQL Server access the stats

DBCC TRACEON (3604, 9204, 9292);

GO

SELECT * FROM dbo.Customer WHERE Id = 10;

GO

SELECT * FROM dbo.Customer WHERE ZIP = ‘15000’;

GO

You might see that both queries will load the index statistics when they will be executed first. A second execution will not look for the stats (as expected).

Now I fill another 20% + 500 records into the table!

INSERT INTO dbo.Customer WITH (TABLOCK)

(Name, Street, ZIP, CIty)

SELECT TOP 3000

‘Customer ‘ + CAST(message_id AS VARCHAR(10)),

‘Street ‘ + CAST(severity AS VARCHAR(10)),

severity * 1000,

LEFT(text, 100)

FROM sys.messages

WHERE language_id = 1033;

GO

The stats will now be outdated!

SELECT COALESCE(I.name, ‘HEAP’) AS name,

rowcnt,

rowmodctr,

statVersion,

stats_date(S.object_id, S.stats_id) AS stats_datum

FROM dbo.sysindexes I INNER JOIN sys.stats AS S

ON

(

i.id = S.object_id AND

i.indid = S.stats_id

)

WHERE id = OBJECT_ID(‘dbo.Customer’);

GO

* Sorry for old school but I am working with a 2008 on this machine 🙂

Both stats have 3,000 changes but need to be updated, isn’t it?

When you run the above queries again you will see that only the stats for the NON UNIQUE index will be updated. The UNIQUE index stats leave “outdated”

I’ve tested it with SQL 2008 and SQL 2012.

Keep on writing – I love your blog posts about stats!

We encountered a strange problem running update statistics with full scan on our SQL Server 2012. After update statistics completed , the server restarted by itself with logging anything in the event log. Is this behavior expected after the statistics are done ?

That is not expected behavior. I would be careful to link the two events, and would check in the SQL Server ERRORLOG to see what’s there.

Hello Erin,

Thanks for the wonderful article.

I tried your example but I skipped the BULK IMPORT part on purpose and directly executed the UPDATE statement.Surprisingly after the update statement the stats did not auto update.Fabio’s query shows that stats on “PK_SalesOrderDetail_SalesOrderID_SalesOrderDetailID” were used and the sys.dm_db_stats_properties DMV after the update shows no of Modification rows 3 on stat “IX_TestSalesOrderDetail_ProductID” and the Last Updated column stays unchanged.

Any idea ?

Sachin-

If you did not import any new data, then the number of rows modified isn’t enough to cause an automatic update of stats when you run the update statement. The threshold of 20% + 500 has to be crossed for an update to occur, which is why I do the bulk update. Hope that helps.

Erin

Thanks Erin for the reply.

I am aware of the 500+20% rule.I think I should had framed my question better.The thing that is confusing me is that why does sys.dm_db_stats_properties show modified rows on IX_TestSalesOrderDetail_ProductID statistic object without the bulk insert and on PK_SalesOrderDetail_SalesOrderID_SalesOrderDetailID with the bulk insert though the no of rows afftected stays the same.In both the cases the Stats name returned by Fabianos query is identical which is PK_SalesOrderDetail_SalesOrderID_SalesOrderDetailID.

Also in your comments you mentioned about TF 2371.I ran this update with TF 2371.The no of effected rows were 12236 which is around 10% of the total rows

DBCC TRACEON(2371)

GO

UPDATE Sales.TestSalesOrderDetail SET ProductID = 717 WHERE SalesOrderID > 71174;

GO

SELECT ProductID FROM Sales.TestSalesOrderDetail WHERE SalesOrderID>71174

GO

DBCC TRACEOff(2371)

GO

The TF did not trigger an auto update.

Many thanks for this excellent blog.

It matches the issue I am having today almost exactly. In my instance a large ETL job was operating from ’empty image’ statistics, and we could not understand why it was that these had not been updated.

We have yet to understand how the query plans involved led to this as they should be ‘first time’ queries that cannot be cached. I suspect some hangarounds in the plan cache may be to blame!