The Query Store feature previewed in Azure SQL Database in summer 2015, was made generally available that fall, and was part of the SQL Server 2016 release the following summer. Over the past four years (has it really been that long?!) I have devoted significant time to learning Query Store – not just understanding how it works from the bottom up, but also why it works the way it does. I’ve also spent a lot of time sharing that information and helping customers understand and implement the feature, and then working with them to use the query store data to troubleshoot issues and stabilize performance. During this time I have developed a deep appreciation for the Query Store feature, as its capabilities go far beyond its original marketing. Is it perfect? No. But it’s a feature that Microsoft continues to invest in, and in this series of blog posts my aim is to help you understand why Query Store is a tool you need to leverage in your environment.

It’s all about the data

The most talked-about benefit of Query Store is its ability to help you dig into query performance and regressions, which is possible because of the data it captures. The bulk of the Query Store data resides seven system tables, exposed through views, with a total of over 150 distinct data points. Notable within that collection of information are:

- Statement text

- individual queries are captured, whether they are ad hoc or part of an object (stored procedure)

- Query Plan(s)

- the plan that was used during execution is stored in Query Store, it’s the equivalent of what you get from the plan cache

- Execution Statistics

- resource use, execution count, duration data and more are stored

- Wait Statistics

- Azure SQL Database and SQL Server 2017 and higher can capture query-level wait statistics

Query Store is a database-scoped feature – it’s not something that’s enabled for the entire instance – therefore the data is stored within the user database. To view that data you can use the built-in reports in Management Studio (the 18.x release has seven reports, earlier releases only have four), or you can write your own queries against the system views.

Viewing data

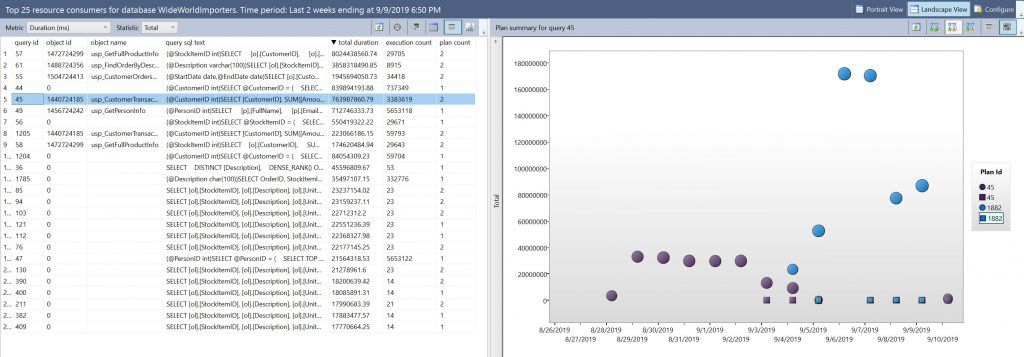

Depending on how you’ve configured Query Store, you can look at historical query information going back days or weeks. Not only can you see trends in query performance over time, but you can also compare query performance between specific windows of time. The image below shows the top 25 queries for the past two weeks based on total duration. The grid view on the left (which I prefer for analysis but is not the default) lets us see the statement text, the object to which it belongs (if it’s blank then it’s an ad hoc query), and the number of plans for the query.

The ability to view performance of the plans over time, as displayed on the right side of the image, is a huge benefit for any data professional that has to troubleshoot performance, or figure out what happened AFTER the fact. For this query, query_id 45, there are two plans, plan_id 45 and plan_id 1882. We can see that one plan, 1882, was used on September 9, 2019, and the other, 45, was used the next day, on September 10, 2019. Had someone complained because performance was abysmal on the 9th, but then just fine on the 10th, we could look at this data and see immediately it was due to a change in plan. If you didn’t have Query Store, how would you know that?

You wouldn’t, unless you had set up a method to capture query plan and execution information, or you had a third-party monitoring tool. On that topic, does Query Store replace the need for third-party monitoring tools? It doesn’t. I don’t believe you need one or the other, I think having access to both only makes your life easier. If you don’t have the budget for a third-party tool, then Query Store is a fantastic option as it’s included in SQL Server, the data is collected within that user database, you control how long the data is kept, and it’s extremely easy to look at query performance using the built-in reports.

3 thoughts on “Why You Need Query Store, Part I: Historical Performance Data”

Hello,

I am new to the query store. However i am interested to find out how to implement query store? Would you suggest enabling it in PROD? What is the potential cost of enabling query store?

I recommend you review my post on Query Store Best Practices: https://www.sqlskills.com/blogs/erin/query-store-best-practices/ That should answer your initial questions.