My last post about nonclustered Columnstore indexes was written back on February 25th, 2012 (Exploring Columnstore Index Metadata, Segment Distribution and Elimination Behaviors). Amazed by how quickly time passes.

Anyhow, this is a quick post on segment population skew based on parallel nonclustered Columnstore index creations.

I’ll use the same 123,695,104 row FactInternetSales table I used almost a year ago to demonstrate. I’ll create the following nonclustered Columnstore index just on one column, to keep things simple:

CREATE NONCLUSTERED COLUMNSTORE INDEX [NCSI_FactInternetSales] ON [dbo].[FactInternetSales] ( [ProductKey] );

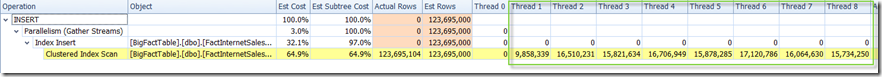

The index takes 31 seconds to create on my laptop and it was created using 8 threads (which I can confirm via the SQL Server execution plan in, this case, SQL Sentry Plan Explorer):

Adding up the actual rows by thread, we get the 123,695,104 row count.

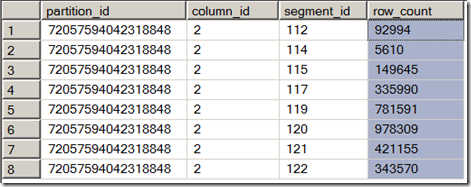

Now if we look at sys.column_store_segments, we can see that the last few segments were populated with less than the maximum 1,048,576 rows:

SELECT [partition_id], [column_id], [segment_id], [row_count] FROM sys.column_store_segments WHERE [row_count] = 1048576 AND [column_id] = 2;

Now the purpose of this short post is to show what happens if we remove parallelism from the overall Columnstore index build (aside from increasing build time and reducing the memory grant):

DROP INDEX [NCSI_FactInternetSales] ON [dbo].[FactInternetSales]; GO CREATE NONCLUSTERED COLUMNSTORE INDEX [NCSI_FactInternetSales] ON [dbo].[FactInternetSales] ( [ProductKey] )WITH (DROP_EXISTING = OFF, MAXDOP = 1); GO

Now instead of running in 31 seconds with 8 schedulers, this serial index build took (not surprisingly) 2 minutes and 10 seconds to build.

How many segments fell beneath the 1,048,576 row count?

This time, just one segment, the last one to be populated. With 117 segments (segment_id 0 through segment_id 117) populated at 1,048,576 per segment, and 123,695,104 rows – our 118th segment has the remaining 1,011,712 rows.

Should the more tightly packed segments provide meaningful performance gains versus the parallel-built, partially filled version? I haven’t tested this yet, but I will at some point. Let me know if you get a chance to do so before I do. My wild guess would be that the benefit would be minor, at best – but as with most things I would like to see for myself.