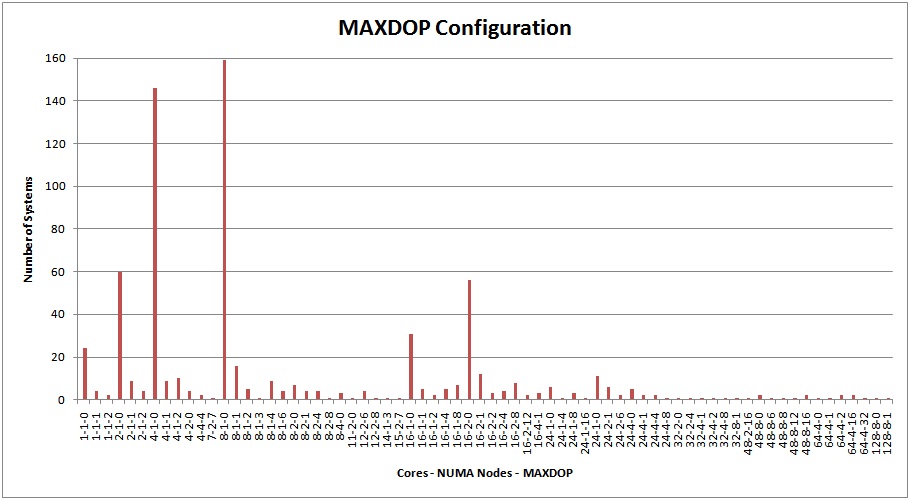

A month ago I kicked off a survey about MAXDOP setting – see here for the survey. I received results for 700 servers around the world! Here they are:

The X-axis format is X-Y-Z, where X = number of cores, Y = number of NUMA nodes, Z = MAXDOP setting. I didn't differentiate between hyper-threading or not, or soft vs. hard NUMA.

Check It Out!

SQLskills Complete Short Course Bundle

$4,575.00 Original price was: $4,575.00.$899.00Current price is: $899.00.

The results are striking – 75% of all systems out there use a server MAXDOP of zero. Now, this doesn't show whether individual queries are using MAXDOP to override that, or resource governor in 2008+ to override that either and I don't have info on the workload for all these servers – so it's not a result we can draw any concrete conclusions from. However, I do find it interesting that such a high proportion are running fine with MAXDOP 0 – my expectation was that there would be a higher proportion of servers with a non-zero MAXDOP setting.

There are quite a few 'black and white' configuration rules out there – for instance:

-

Set MAXDOP to 1 if you're seeing CXPACKET waits as the prevalent wait type.

-

Set MAXDOP to 1 for OLTP systems, and don't do anything else.

-

Old Microsoft guidance to set MAXDOP to half the number of physical processors.

-

Set MAXDOP to the number of cores in the NUMA node.

These are all incorrect as *rules*. There is no one-size-fits-all rule for MAXDOP – there are only general guidelines. For instance:

-

For OLTP systems, it can often be beneficial to set MAXDOP to 1 and then use the MAXDOP query hint to override the server-wide setting for queries that can benefit from parallelism.

-

For mixed-workload systems, you need to be careful how you set MAXDOP so you don't inadvertently penalize one of the workloads. Judicious use of the MAXDOP query hint can help here. A more powerful solution for mixed workloads is to use resource governor and have a workload group for each portion of the workload, with a different MAXDOP for each workload group.

-

For systems with high CXPACKET waits, investigate why this is the case before reducing MAXDOP. It's easy to come up with a demo where there are lots of CXPACKET waits, and while reducing MAXDOP (for the server or the query) reduces the CXPACKET waits, it also makes the query take a lot longer. CXPACKET waits can be because the statistics are incorrect and the query execution system divides up the work by the out-of-date statistics

-

Consider using the cost threshold for parallelism setting – see Jonathan's recent post here.

Using the resource governor as I described above can be a very easy way to mess around with the MAXDOP setting – especially for applications with legacy code that you cannot change, and you don't want to set a server-wide MAXDOP setting. This even works if the legacy code uses MAXDOP query hints, because the resource governor workload group MAXDOP setting *cannot* be overridden.

The key point when making any change for performance tuning is to test the change before putting it into production and work out which setting works best for your workload on your system – rather than blindly following 'this is the best way' rules for settings that people publish.

In other words, what should your MAXDOP be set to? It depends! :-)

One thing to be aware of: if you change the MAXDOP setting for the server, it will flush the plan cache when you do. It shouldn't, but it does. Be careful when doing this on a production server…

Thanks to all those who replied to the original survey!

10 thoughts on “MAXDOP configuration survey results”

Good to know about the plan cache.

We actually change ours twice a day to account for off-hours heavy work vs business-hours user support. It’s been a recent change and has made the off-hours stuff perform much better.

Thank you very much paul…This Post really cleared all my doubts on MAXDOP. read many posts recently by many people.. I have got the info but not as practical…

Thanks once again for the clarifications..

Aditya

Actually, speaking of plan cache. I’ve inherited a system that has a nightly ETL process. I have made some "improvements" to the system by moving some of the work out of SSIS and into SQL stored procedures. I’ve managed to decrease run time by 75%-80%. The problem is I occasionally get processes blocking themselves. Lots and lots of CXPACKET waiting processes all showing taskid the same as the blocking taskid. I’ve found things work if I set the server to a Max DOP of 1 instead of the default 0 and re-run the stored proc. The question is this: am I fixing it because of MAXDOP = 1 or because I’ve reset the plan cache? One of these stored procedures had the "OPTION (MAXDOP 1)" hint but still blocked itself until I set the server Max DOP. And the final complication is that the stored procedure is using a view so I don’t know if setting the hint in the SP actually forces the view to run without parallelism. I suspect it doesn’t since I was still seeing CXPACKET waits.

I’m going to take this to another forum as well but I thought people here might find it interesting.

Thank you for pointing out there is more to MAXDOP than I thought. I was convinced MAXDOP should be 0 unless an application can’t work with it (such as BizTalk Server) as MAXDOP at 0 would improve performance because it could run parallel queries. Seems to me I need to look into MAXDOP further…

Very useful information, thank you. I wanted to ask you, what is exactly happening when maxdop is changed? Well, obviously degree of parallelism. Procedure cache is being cleared as well. Anything else? I’ve been in numerous situations when sql server is practically stuck and unresponsive, just changing the mdop “restarts” it and things start moving again. Hard for me to believe that clearing proc cache, which is usually around 10% of my total buffer size will make such a dramatic difference. is something stuck and waiting before even entering the cache and clearing it allows cache to accept “new” commands?

My guess would be you have a few queries that are executed a huge amount, so if there’s a bad plan it will essentially hang the server. Causing the plan to recompile to a more efficient plan will look like it unhung the server.

Hello Paul, thanks for this blog post. Ours is a DW env with mixed type of workload(80-20 split approx). The server presently runs SQL 2008 with server level maxdop = 2. We are upgrading to SQL 2012 with new h/w. We are getting 60core server with 4 NUMA Nodes * 15 Cores each. I want to test with higher MAXDOP setting, preferrably maxdop=15 (num of cores per NUMA node) so SQL Server can keep processing within a NUMA node. However I see advise from internet that never go higher than MAXDOP=8. Can you please provide your opinion? Thanks.

Impossible to say what is the best for you. You’ll need to test both and see which gives the best results. Also raise the cost threshold for parallelism setting to reduce the amount of unwanted parallelism from small queries.

Ref: https://docs.microsoft.com/en-us/dynamicsax-2012/appuser-itpro/configure-sql-server-and-storage-settings (Configure SQL Server and storage settings [Microsoft Dynamics Ax])

In DBs world we just listened IT DEPENDS; but this is the unique one instance in which regardless of all advancements this document still pointing same value which was once devised in 2008. Kindly any intellectual thoughts on it?

Thanks

If that’s what they recommend, I can’t argue with that, but remember that it’s for a 7-year old product. As always, I’d recommend testing different values and seeing what works best for the particular way you use Dynamics.