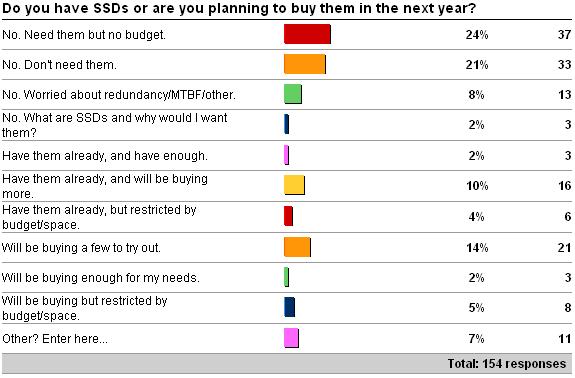

Back at the start of July I kicked off a survey around your plans for SSDs (see here) and now I present the results to you. There's not much to editorialize here, but the numbers are interesting to see.

The "other" answers were (verbatim):

- 3 x 'have bought and am trying them out'
- 3 x 'not sure if we need them or not'
- 2 x 'all production servers are hosted'
- 1 x 'bought them, tried them..not good enough yet for tempdb'
- 1 x 'Have some, want more, could you really every have enough?'
- 1 x 'We get every penny from or spinning media, and have no need for SSD'

The results reflect what I've been hearing when teaching classes and talking to customers/conference attendees over the last six months. People are becoming more interested in SSDs, but there's still a lot of wariness about them and, of course, the question of finding the money to buy them. I'm also not surprised (given the general readership demographics of this blog) by the number of people who've analyzed their IOPS requirements and concluded that they don't need SSDs to meet them.

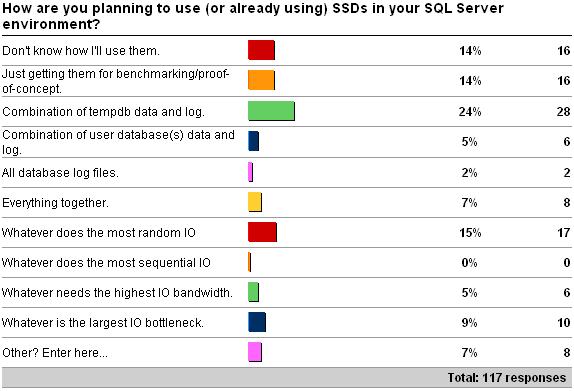

The "other" answers were (verbatim):

- 3 x 'not in the budget'
- 1 x 'I plan to buy expensive drives and throw them at you, paul! love, conor'
- 1 x 'I'm going to do the same thing Conor will do. Denny'
- 1 x 'OLAP Scale Out'
- 1 x 'Use them as cache'
- 1 x 'Using in an EMC V-MAX SAN to dynamically move high workloads to SSD temporarily'

Ahem – thanks Conor and Denny :-)

Another unsurprising set of results that reflects what I've been hearing. One number I'd be interested in drilling deeper into is answer #3 – are people putting/planning to put tempdb on SSDs because that's what they've heard is the best thing to do, or because tempdb truly is the largest I/O bottleneck that can benefit the most from SSDs? That's a set of experiments I'd like to try out with my Fusion-io drives.
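As a rough illustration of the "measure it first" side of that question, here is a minimal sketch of how you might work out each database's share of total I/O. It assumes you have already captured per-file byte counts somewhere (for example, the `num_of_bytes_read` and `num_of_bytes_written` columns from `sys.dm_io_virtual_file_stats`). The function name, the tuple layout, and the sample numbers are all made up for illustration and are not survey data.

```python
from collections import defaultdict


def io_share_by_database(file_stats):
    """Return each database's fraction of total bytes read plus written.

    file_stats is an iterable of (database_name, bytes_read, bytes_written)
    tuples, one per database file. This layout is a hypothetical convention
    for the sketch, not a format produced by any tool.
    """
    totals = defaultdict(int)
    for database_name, bytes_read, bytes_written in file_stats:
        totals[database_name] += bytes_read + bytes_written

    grand_total = sum(totals.values())
    if grand_total == 0:
        # No I/O recorded at all, so there are no shares to report.
        return {}
    return {name: total / grand_total for name, total in totals.items()}


# Made-up sample numbers (in MB), purely to show the calculation.
sample = [
    ("tempdb", 300, 200),
    ("sales", 250, 50),
    ("sales", 80, 20),   # a second data file for the same database
    ("hr", 90, 10),
]

shares = io_share_by_database(sample)
for name, share in sorted(shares.items(), key=lambda item: -item[1]):
    print(f"{name}: {share:.0%}")
```

Byte counts alone don't tell you about latency, so a high share for tempdb only shows where the volume is, not that SSDs would fix a bottleneck. Counters like these are typically cumulative, so comparing two snapshots taken around a representative workload would give a fairer picture than a single reading.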

The final "other" answer is also interesting. I was talking to a couple of folks from EMC in Ireland about the V-MAX when we were there earlier this month. It's a very cool idea to migrate data up and down a set of devices with varying latencies (at the block level, not the file level). I'd like to see more on how the technology copes with one-off operations like consistency checks or backups: do those I/Os affect which layer a block resides in?

Anyway, hope you find these results interesting.

Thanks to all those who responded!

5 thoughts on “Survey results around purchase and use of SSDs”

We deployed tempdb on SSD because tempdb was 50% of all the IO on our two biggest boxes. As with any other recommendation, testing and measuring are key.

Paul,

The tech in the EMC V-MAX is called FAST (I don’t remember what it stands for). FAST is coming to the CLARiiON line of arrays later this year. Once I’ve got it deployed (I’m not sure whether there is an extra license I’ll have to pay for) I’ll let you know. As I understand it, there are supposed to be settings that can be tuned to tell the array how often a block needs to be touched before it’ll be moved up or down the disk levels.

Denny

My company’s shopping for an entry-level SAN and recently got a pitch from Compellent. They’re already shipping an "automatic tiering" capability that migrates block-level data between disk types automatically. I don’t know the details of the heuristics, but they described a setup with a few SSDs "up front", 15K drives at tier-2, and cheap, slow, RAID-5 for the infrequently touched data. I’m not sure my environment is big enough to gain much by it, but it sure sounded cool.

We just tried a few of the brand x SSDs in RAID 10 on brand y RAID controller under ESXi 4. Under load the result was total database corruption. I advise much caution.

We are working on deploying them for two solutions. One is tempdb, as it is approximately 25% of the write workload and 10% of the overall read workload. Granted, that’s not as high as it could be, but it’s still an impact. The other is a database with periodic massive write spikes that really affect other SAN-related activity where there is a shared controller. The big caveat with anything other than tempdb is clustering, so we’re still trying to work through the nuances associated with that for this database. :( Certainly having the tiered shared storage presentation of SSDs is the way to go. Unfortunately that is not in our budget. :)