This post is a continuation of the SQL Server 2014 Cardinality Estimator enhancements exploration series:

- A first look at the query_optimizer_estimate_cardinality XE event

- “CSelCalcCombineFilters_ExponentialBackoff” Calculator

- “CSelCalcCombineFilters_ExponentialBackoff” Calculator– Part II

- The CSelCalcAscendingKeyFilter Calculator

- Cardinality Estimation Model Version

In the previous post I showed you how to start using the new SQL Server 2014 CE model, but what different does it actually make?

Of course your own mileage may vary (and you should expect it to), but I thought I would kick the tires a bit by comparing root-level cardinality estimate skews for a specific workload against the AdventureWorks2008R2 database. I used Jonathan’s “AdventureWorks BOL Workload.sql” script that he put together for use in the context of The AdventureWorks2008R2 Books Online Random Workload Generator (I made a few minor adjustments – for example, removing the queries that referenced the deprecated COMPUTE).

- I first cleared the cache of the (test) SQL Server 2014 CTP2 instance and then ran the script against the AdventureWorks2012 database using a pre-SQL Server 2014 database compatibility level (100).

- I then collected the root-level cardinality skew information and then re-ran the test using the SQL Server 2014 database compatibility level (120) and again collected the root-level cardinality skew information for that test as well.

The query I used for extracting estimated vs. actual last rows was as follows (for both tests):

SELECT s.[query_hash],

p.[query_plan].value('(//@EstimateRows)[1]', 'varchar(128)') AS [estimated_rows],

s.[last_rows]

FROM sys.[dm_exec_query_stats] AS [s]

CROSS APPLY sys.[dm_exec_sql_text](sql_handle) AS [t]

CROSS APPLY sys.[dm_exec_query_plan](plan_handle) AS [p]

WHERE DATEDIFF(mi, s.[last_execution_time], GETDATE()) < 1

GO

Of course, root level cardinality skews don’t really give us the full picture (see my post Detecting Cardinality Estimate Issues with sys.dm_exec_query_stats), especially for significant skews that are in the leaf-level or intermediate levels of the plan that work themselves out by the time they reach the root. But this informal test was a way for me to get a general feel for changes across several statements in a workload.

Now for these tests, I had query_hash values that would appear for the old-CE and not the new-CE and for the new-CE and not the old-CE. Rather than run down that bunny trail (background requests, for example), I just focused for the time being on those query_hash values that joined consistently across each test run.

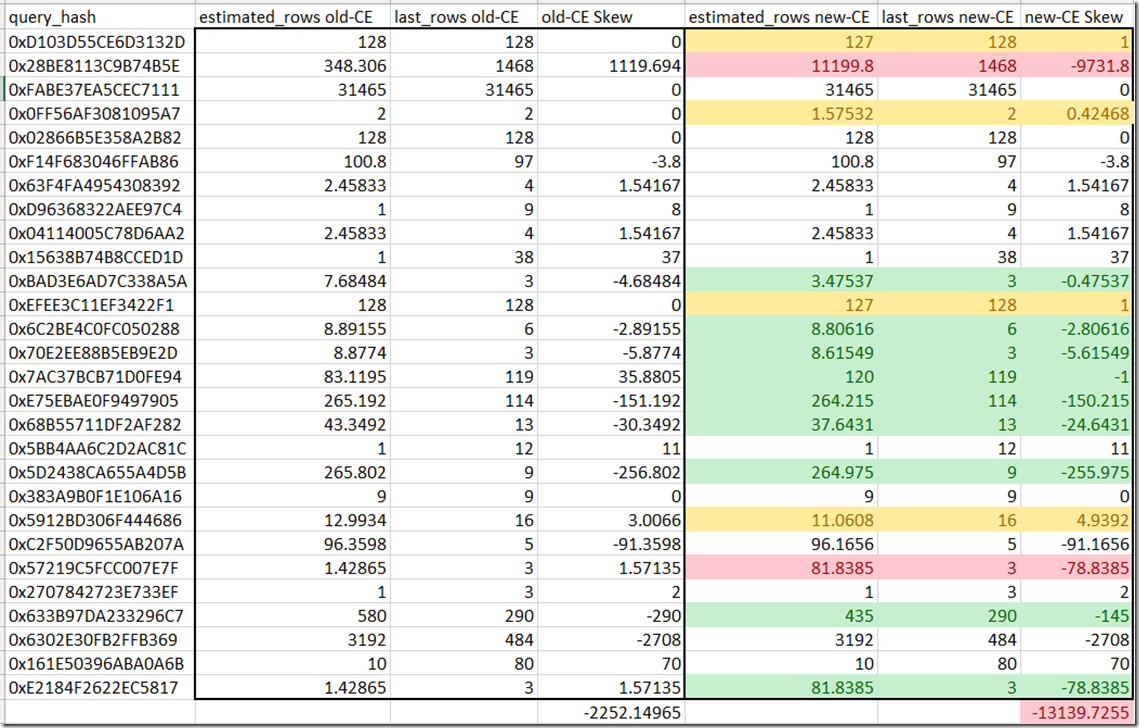

Here is what I saw – adding a bit of color to the noteworthy changes of old-CE vs. new-CE.

Now with regards to one round of informal testing I saw:

- 9 root-level estimates improve (some slightly, some more significant)

- 3 root-level estimates degrade slightly

- 2 root-level estimates skew “more than slightly”

- 13 plans remained the same

How consistent were the results across tests? It was repeatable. I did three test rounds where the skew was identical across workloads for the new CE and and for the old – as I would expect (and ruling out any rogue background queries jumping in during my test or new query hash values – there were 28 query_hash values I could keep track of).

Now again, this is just root-level skew. Quite a bit can be buried in the leaf and intermediate levels of the plan, so I’ll be exploring changes there as well, but I thought this would be an interesting first cut at the overall workload estimate changes.

More to come…