While it’s good to keep an eye on your SQL Server instances – it’s a balancing act. On one side you want to be sure that you are monitoring critical areas and pulling enough information to perform root cause analysis on anything out-of-the-norm. But on the other side, if you pull an excessive amount of information (or redundant information), your monitoring could end up being the cause of the problems you were looking to prevent.

Let’s get specific.

Imagine your HR department has an application that executes the following query several times per minute:

SELECT JobCandidateID, [Edu.Level], [Edu.StartDate], [Edu.EndDate], [Edu.Degree],[Edu.Major], [Edu.Minor], [Edu.GPA], [Edu.GPAScale], [Edu.School], [Edu.Loc.CountryRegion], [Edu.Loc.State], [Edu.Loc.City]

FROM HumanResources.vJobCandidateEducation;

This is a simple, but important query for the department, and typically the query finishes executing in 10 milliseconds on the production system.

Now imagine that you get a call from the HR department that all queries seem to be running slowly. They send you the aforementioned query and you execute it, seeing that indeed it is running in 31 milliseconds instead of the normal 10 milliseconds.

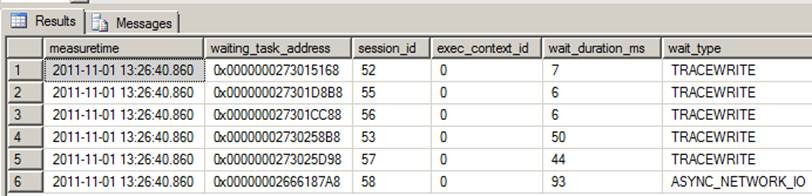

The HR team is still getting work done, just more slowly now. Since workloads were still coming through, you decide to check out what the tasks are waiting on by querying sys.dm_os_waiting_tasks. Right away you see something unusual:

There are five waiting requests on TRACEWRITE and one on ASYNC_NETWORK_IO.

You saw this wait type before related to SQL Trace – but why would there be five of these wait types across different sessions?

Perhaps you have multiple DBA and Developers in your environment with the permission to launch SQL Profiler traces. Even though the intention was good, by virtue of what events you are monitoring and the throughput of activity on the monitored SQL Server instance, you could be hurting the performance of the SQL Server instance. Another source of these waits could be spawned from third party monitoring software packages (again with good intentions) – and for whatever reason something was misconfigured or there is redundant tracing due to lack of communication or centralized documentation.

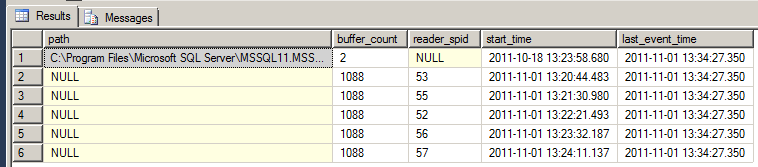

So to wrap up this story, you decide to query sys.traces and find the reader_spid for each trace running against the SQL Server instance:

SELECT path, buffer_count, reader_spid, start_time, last_event_time

FROM sys.traces

This returns:

You already saw the waiting session ids in sys.dm_os_waiting_tasks – but now you know this is trace related having been surfaced in sys.traces. You look up the spid connection information in other DMVs and you notify them about the issue and have them shut down the traces. After doing this, you see the TRACEWRITE waiting tasks disappear and you retest the original query, finding that it has returned down to 10 ms.

As a postscript, one of the virtues of Extended Events versus SQL Profiler is that Extended Events are significantly more lightweight. And while I haven’t tested the overhead of excessive tracing using Extended Events, I imagine that as people move to this method, they will need to work a little harder to cause “observer overhead” problems like this – but I’m sure people will find a way.

** Oh – and a post-postscript. If you have a chance, don't take my word for it, test this out (in a test environment) for yourself. Pick a query and measure the "before" performance via SET STATISTICS TIME. Get a few samplings and make note of your average. Then spin up multiple SQL Profiler sessions. Prior to the blog, 5 sessions did the trick, with varying overlapping events being tracked. Then measure the SET STATISTICS TIME and compare. Your impact will vary based on your specifications. **

3 thoughts on “Observer overhead – the perils of too much tracing”

Adding a comment to my own post – but just to call out a good distinction that Aaron Betrand made in case anyone walked away with a similar assumption: While the TRACEWRITE helped point to the root cause (heavy tracing) – this isn’t to be confused with the waits being the cause itself. The wait types help orient you in troubleshooting a case like this.

I kinda wanted to know who exactly was doing what,so I added a few simple things:

SELECT

s.host_name,

s.program_name,

t.path,

t.buffer_count,

t.reader_spid,

t.start_time,

t.last_event_time ,

s.client_interface_name,

r.command,

s.login_name,

s.nt_user_name

FROM sys.traces t

left outer join

sys.dm_exec_sessions s

on t.reader_spid = s.session_id

left outer join

sys.dm_exec_requests r

on t.reader_spid = r.session_id

Thanks Amanda!

Comments are closed.