As I have said previously in this series, one of my favorite aspects of Extended Events is that it allows you to look at what is going on under the covers in SQL Server, at a level that has never previously been possible. SQL Server Denali CTP1 includes a number of new Events that expand on the information that we can learn about how SQL Server operates and in today’s blog post we’ll look at how we can use those Events to look at what happens when a database starts up inside of SQL Server.

First lets create our Event Session, which will collect a large number of events that relate to the operations that occur when a database starts in SQL Server.

DECLARE @sqlcmd nvarchar(max) = 'IF EXISTS(SELECT * FROM sys.server_event_sessions WHERE name=''MonitorStartupLogRecovery'') DROP EVENT SESSION [MonitorStartupLogRecovery] ON SERVER; CREATE EVENT SESSION [MonitorStartupLogRecovery] ON SERVER ADD EVENT sqlserver.database_started (WHERE (database_id = ' + CAST(DB_ID('AdventureWorks2008R2') AS varchar(3)) + ')), ADD EVENT sqlserver.databases_log_file_used_size_changed (WHERE (database_id = ' + CAST(DB_ID('AdventureWorks2008R2') AS varchar(3)) + ')), ADD EVENT sqlserver.databases_log_flush (WHERE (database_id = ' + CAST(DB_ID('AdventureWorks2008R2') AS varchar(3)) + ')), ADD EVENT sqlserver.databases_log_flush_wait (WHERE (database_id = ' + CAST(DB_ID('AdventureWorks2008R2') AS varchar(3)) + ')), ADD EVENT sqlserver.file_read (WHERE (database_id = ' + CAST(DB_ID('AdventureWorks2008R2') AS varchar(3)) + ')), ADD EVENT sqlserver.file_read_completed (WHERE (database_id = ' + CAST(DB_ID('AdventureWorks2008R2') AS varchar(3)) + ')), ADD EVENT sqlserver.file_write_completed (WHERE (database_id = ' + CAST(DB_ID('AdventureWorks2008R2') AS varchar(3)) + ')), ADD EVENT sqlserver.file_written (WHERE (database_id = ' + CAST(DB_ID('AdventureWorks2008R2') AS varchar(3)) + ')), ADD EVENT sqlserver.log_block_cache (WHERE (database_id = ' + CAST(DB_ID('AdventureWorks2008R2') AS varchar(3)) + ')), ADD EVENT sqlserver.log_block_consume (WHERE (database_id = ' + CAST(DB_ID('AdventureWorks2008R2') AS varchar(3)) + ')), ADD EVENT sqlserver.log_blocks_uncache (WHERE (database_id = ' + CAST(DB_ID('AdventureWorks2008R2') AS varchar(3)) + ')), ADD EVENT sqlserver.log_cache_buffer_refcounter_change (WHERE (database_id = ' + CAST(DB_ID('AdventureWorks2008R2') AS varchar(3)) + ')), ADD EVENT sqlserver.log_consumer_act (WHERE (database_id = ' + CAST(DB_ID('AdventureWorks2008R2') AS varchar(3)) + ')), ADD EVENT sqlserver.log_flush_complete (WHERE (database_id = ' + CAST(DB_ID('AdventureWorks2008R2') AS varchar(3)) + ')), ADD EVENT sqlserver.log_flush_requested (WHERE (database_id = ' + CAST(DB_ID('AdventureWorks2008R2') AS varchar(3)) + ')), ADD EVENT sqlserver.log_flush_start (WHERE (database_id = ' + CAST(DB_ID('AdventureWorks2008R2') AS varchar(3)) + ')), ADD EVENT sqlserver.log_single_record (WHERE (database_id = ' + CAST(DB_ID('AdventureWorks2008R2') AS varchar(3)) + ')), ADD EVENT sqlserver.new_log_interest_flip (WHERE (database_id = ' + CAST(DB_ID('AdventureWorks2008R2') AS varchar(3)) + ')), ADD EVENT sqlserver.redo_single_record (WHERE (database_id = ' + CAST(DB_ID('AdventureWorks2008R2') AS varchar(3)) + ')), ADD EVENT sqlserver.redo_target_set (WHERE (database_id = ' + CAST(DB_ID('AdventureWorks2008R2') AS varchar(3)) + ')), ADD EVENT sqlserver.transaction_log (WHERE (database_id = ' + CAST(DB_ID('AdventureWorks2008R2') AS varchar(3)) + ')) ADD TARGET package0.asynchronous_file_target( SET filename=''C:\SQLBlog\MonitorStartupLogRecovery.xel'', metadatafile=''C:\SQLBlog\MonitorStartupLogRecovery.xem'') WITH (MAX_MEMORY = 8192KB, EVENT_RETENTION_MODE = ALLOW_SINGLE_EVENT_LOSS, STARTUP_STATE = ON)' EXEC(@sqlcmd) GO

With the Event Session created, we can make some changes that write to our test database to see what happens when the database is recovered at startup. We are going to can make two changes to the database. First we’ll begin a transaction and create a table with 10 rows of data in it without committing the transaction.

Check It Out!

SQLskills Complete Short Course Bundle

$4,575.00 Original price was: $4,575.00.$899.00Current price is: $899.00.

USE AdventureWorks2008R2 GO -- Begin a Transaction and leave it open BEGIN TRANSACTION -- Create the First Table SELECT TOP 10 * INTO TestTable FROM Sales.SalesOrderDetail sod GO

Now in a New Query Window, we’ll create a second table with 10 rows of data without opening a transaction, and then force a dirty shutdown of the Database Engine.

USE AdventureWorks2008R2 GO -- Create a Second Table SELECT TOP 10 * INTO TestTable2 FROM Sales.SalesOrderDetail sod GO -- Flush changes to data file CHECKPOINT GO -- Force Shutdown the Engine SHUTDOWN

Once SHUTDOWN is issued, the process terminates, and the Database Engine will need to be restarted from the Services Snapin, the SQL Server Configuration Manager, or through SSMS. When the Engine starts up, the Event Session will become active, and the Events will be logged to the package0.asynchronous_file_target for analysis. Once recovery completes, we can drop the Event Session from the Server, so that the buffers flush, and we can then begin our analysis of the collected information.

USE tempdb GO -- Read the Raw Event data into a table SELECT CAST(event_data AS XML) AS event_data INTO TargetEvents FROM sys.fn_xe_file_target_read_file('C:\SQLBlog\MonitorStartupLogRecovery*.xel', 'C:\SQLBlog\MonitorStartupLogRecovery*.xem', null, null) -- Fetch the Event Data from the Raw Event Data into another table SELECT event_data.value('(event/@name)[1]', 'varchar(50)') AS event_name, DATEADD(hh, DATEDIFF(hh, GETUTCDATE(), CURRENT_TIMESTAMP), event_data.value('(event/@timestamp)[1]', 'datetime2')) AS [timestamp], COALESCE(event_data.value('(event/data[@name="database_id"]/value)[1]', 'int'), event_data.value('(event/action[@name="database_id"]/value)[1]', 'int')) AS database_id, event_data.value('(event/data[@name="count"]/value)[1]', 'bigint') AS [count], event_data.value('(event/data[@name="start_log_block_id"]/value)[1]', 'bigint') AS [start_log_block_id], event_data.value('(event/data[@name="is_read_ahead"]/value)[1]', 'nvarchar(4000)') AS [is_read_ahead], event_data.value('(event/data[@name="private_consumer_id"]/value)[1]', 'bigint') AS [private_consumer_id], event_data.value('(event/data[@name="mode"]/text)[1]', 'nvarchar(4000)') AS [mode], event_data.value('(event/data[@name="file_handle"]/value)[1]', 'nvarchar(4000)') AS [file_handle], event_data.value('(event/data[@name="offset"]/value)[1]', 'bigint') AS [offset], event_data.value('(event/data[@name="file_id"]/value)[1]', 'int') AS [file_id], event_data.value('(event/data[@name="filegroup_id"]/value)[1]', 'int') AS [filegroup_id], event_data.value('(event/data[@name="size"]/value)[1]', 'bigint') AS [size], event_data.value('(event/data[@name="path"]/value)[1]', 'nvarchar(4000)') AS [path], event_data.value('(event/data[@name="duration"]/value)[1]', 'bigint') AS [duration], event_data.value('(event/data[@name="io_data"]/value)[1]', 'nvarchar(4000)') AS [io_data], event_data.value('(event/data[@name="resource_type"]/text)[1]', 'nvarchar(4000)') AS [resource_type], event_data.value('(event/data[@name="owner_type"]/text)[1]', 'nvarchar(4000)') AS [owner_type], event_data.value('(event/data[@name="transaction_id"]/value)[1]', 'bigint') AS [transaction_id], event_data.value('(event/data[@name="lockspace_workspace_id"]/value)[1]', 'nvarchar(4000)') AS [lockspace_workspace_id], event_data.value('(event/data[@name="lockspace_sub_id"]/value)[1]', 'int') AS [lockspace_sub_id], event_data.value('(event/data[@name="lockspace_nest_id"]/value)[1]', 'int') AS [lockspace_nest_id], event_data.value('(event/data[@name="resource_0"]/value)[1]', 'int') AS [resource_0], event_data.value('(event/data[@name="resource_1"]/value)[1]', 'int') AS [resource_1], event_data.value('(event/data[@name="resource_2"]/value)[1]', 'int') AS [resource_2], event_data.value('(event/data[@name="object_id"]/value)[1]', 'int') AS [object_id], event_data.value('(event/data[@name="associated_object_id"]/value)[1]', 'bigint') AS [associated_object_id], event_data.value('(event/data[@name="resource_description"]/value)[1]', 'nvarchar(4000)') AS [resource_description], event_data.value('(event/data[@name="database_name"]/value)[1]', 'nvarchar(4000)') AS [database_name], event_data.value('(event/data[@name="log_block_id"]/value)[1]', 'bigint') AS [log_block_id], event_data.value('(event/data[@name="log_block_size"]/value)[1]', 'int') AS [log_block_size], event_data.value('(event/data[@name="from_disk"]/value)[1]', 'nvarchar(4000)') AS [from_disk], event_data.value('(event/data[@name="incomplete"]/value)[1]', 'nvarchar(4000)') AS [incomplete], event_data.value('(event/data[@name="cache_buffer_pointer"]/value)[1]', 'nvarchar(4000)') AS [cache_buffer_pointer], event_data.value('(event/data[@name="consumer_id"]/value)[1]', 'bigint') AS [consumer_id], event_data.value('(event/data[@name="old_weight"]/value)[1]', 'int') AS [old_weight], event_data.value('(event/data[@name="new_weight"]/value)[1]', 'int') AS [new_weight], event_data.value('(event/data[@name="new_position"]/value)[1]', 'int') AS [new_position], event_data.value('(event/data[@name="last_log_block_id"]/value)[1]', 'bigint') AS [last_log_block_id], event_data.value('(event/data[@name="weight"]/value)[1]', 'int') AS [weight], event_data.value('(event/data[@name="address"]/value)[1]', 'nvarchar(4000)') AS [address], event_data.value('(event/data[@name="type"]/text)[1]', 'nvarchar(4000)') AS [type], event_data.value('(event/data[@name="current_count"]/value)[1]', 'int') AS [current_count], event_data.value('(event/data[@name="change_type"]/value)[1]', 'int') AS [change_type], event_data.value('(event/data[@name="activity_id"]/value)[1]', 'int') AS [activity_id], event_data.value('(event/data[@name="write_size"]/value)[1]', 'int') AS [write_size], event_data.value('(event/data[@name="rows"]/value)[1]', 'int') AS [rows], event_data.value('(event/data[@name="pending_writes"]/value)[1]', 'int') AS [pending_writes], event_data.value('(event/data[@name="pending_bytes"]/value)[1]', 'int') AS [pending_bytes], event_data.value('(event/data[@name="reason"]/text)[1]', 'nvarchar(4000)') AS [reason], event_data.value('(event/data[@name="waiters"]/value)[1]', 'int') AS [waiters], event_data.value('(event/data[@name="error"]/value)[1]', 'int') AS [error], event_data.value('(event/data[@name="slot_id"]/value)[1]', 'int') AS [slot_id], event_data.value('(event/data[@name="used_size"]/value)[1]', 'int') AS [used_size], event_data.value('(event/data[@name="reservation_size"]/value)[1]', 'bigint') AS [reservation_size], event_data.value('(event/data[@name="log_op_id"]/value)[1]', 'int') AS [log_op_id], event_data.value('(event/data[@name="log_op_name"]/value)[1]', 'nvarchar(4000)') AS [log_op_name], event_data.value('(event/data[@name="interest"]/value)[1]', 'nvarchar(4000)') AS [interest], event_data.value('(event/data[@name="cache_type"]/value)[1]', 'int') AS [cache_type], event_data.value('(event/data[@name="keys"]/value)[1]', 'nvarchar(4000)') AS [keys], event_data.value('(event/data[@name="stop_mark"]/value)[1]', 'nvarchar(4000)') AS [stop_mark], event_data.value('(event/data[@name="operation"]/text)[1]', 'nvarchar(4000)') AS [operation], event_data.value('(event/data[@name="success"]/value)[1]', 'nvarchar(4000)') AS [success], event_data.value('(event/data[@name="index_id"]/value)[1]', 'int') AS [index_id], event_data.value('(event/data[@name="log_record_size"]/value)[1]', 'int') AS [log_record_size], event_data.value('(event/data[@name="context"]/text)[1]', 'nvarchar(4000)') AS [context], event_data.value('(event/data[@name="replication_command"]/value)[1]', 'int') AS [replication_command], event_data.value('(event/data[@name="transaction_start_time"]/value)[1]', 'nvarchar(4000)') AS [transaction_start_time] INTO Results FROM TargetEvents

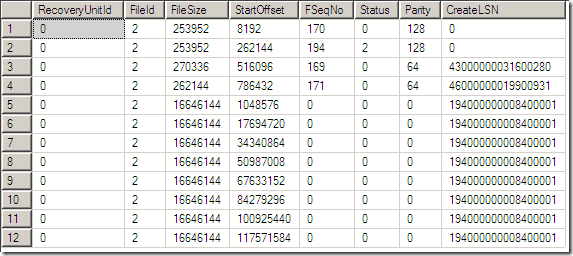

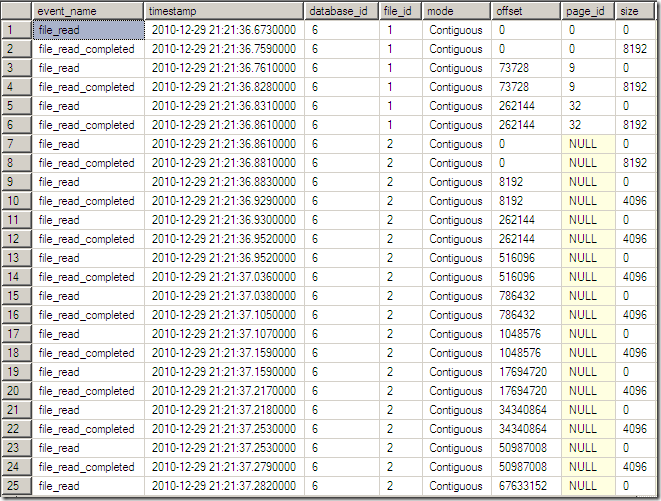

Now we can begin to analyze the information that we collected by querying the Results table. Looking at the Results as a whole, we can see the database opened by reading the first page of the database and then the database boot page (page_id=9) and page 32 of the primary data file. Then the engine scans each of the VLF’s of the transaction log. We can tell that the log reads are scans of the VLF’s by looking at the DBCC LOGINFO information for the database and comparing the file_read offsets from the Event Session to the StartOffset of each of the VLF’s in the DBCC LOGINFO output.

DBCC LOGINFO

SELECT event_name, timestamp, database_id, file_id, mode, offset, CASE WHEN file_id = 1 THEN offset/8192 ELSE NULL END AS page_id, size, log_block_id, log_block_size, start_log_block_id, last_log_block_id, from_disk, consumer_id, activity_id, log_op_id, log_op_name, change_type, operation, object_id, index_id, log_record_size, slot_id, used_size, reservation_size, write_size, rows, pending_writes, pending_bytes, context, waiters FROM Results

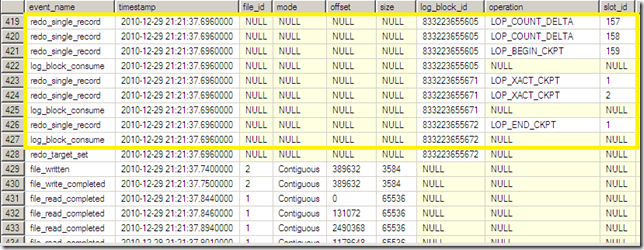

After the startup scans the VLF’s 120K of information is read from the log file, and the log buffers start to be consumed to determine the redo start point for recovery. We can filter our Event data to remove Events that while interesting are not necessary for our analysis at the moment, as well as to reduce the number of columns being returned from the data set.

SELECT event_name, timestamp, file_id, mode, offset, size, log_block_id, COALESCE(log_op_name, operation) as [operation], slot_id, object_id, index_id, log_record_size, context, write_size, rows FROM Results WHERE event_name NOT IN ('log_consumer_act', 'log_single_record', 'log_cache_buffer_refcounter_change', 'file_read')

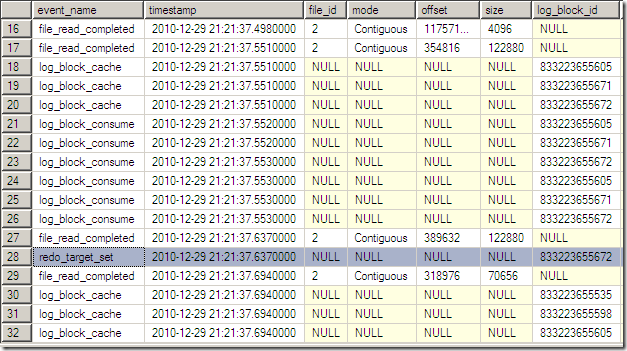

With the filtered results, we can see the log reads into cache and the setting of the redo target. If we scroll down further, we can get a better picture of what is happening.

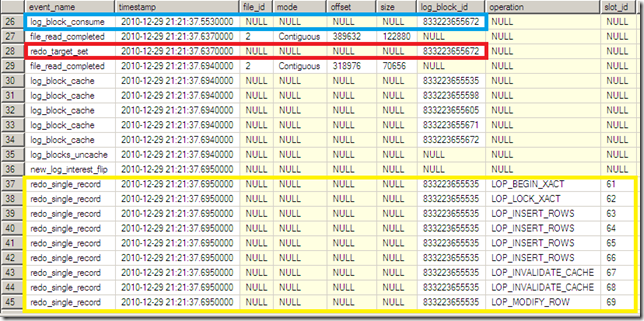

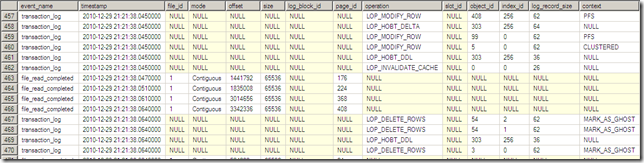

The last active log blog was consumed, and the redo target was set at that log_block_id. Then the log is reread starting at offset 318976 and the blocks get cached and the redo operations begin against the database. Scrolling through the results further, we can see that the redo operations continue as the log blocks increase up to the Checkpoint operation that was executed immediately before the Shutdown of the instance occurred, at the log block that was set as the redo target originally.

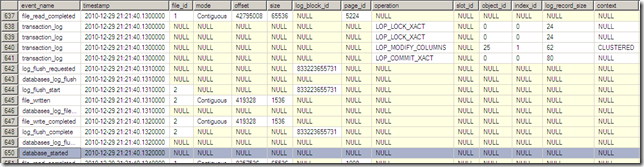

At this point the data file begins to be read so that the undo operations can be performed before making the database available.

After the undo completes the database_started Event is raised and that database becomes available for use.

To validate that the changes we see occurring after the redo operations and before the database_started Event, we can set the database OFFLINE, recreate our Event Session, and then bring the database back ONLINE again, and then compare the logged Events when no changes have occurred to our original Events when known changes have occurred. I am not going to do that in this blog post, but will instead leave that up to the reader to investigate on their own.

One thought on “An XEvent a Day (29 of 31) – The Future – Looking at Database Startup in Denali”

Hi Jonathan,

Are modifications to the data pages that happen as part of REDO process again logged into transaction log or not?

How the redo activity will be tracked by SQL Server if machine crashes again in the middle of a redo?

Thanks.