This only demonstrates that the performance impact is the result of AT TIME ZONE. To see how to work across date ranges read my follow up post: More About AT TIME ZONE.

This is not the type of blog post that I enjoy writing. First let me start off by saying this is not a bash against Microsoft, the SQL Server team, or anything other than an informative post to let people know that there is a potential performance limitation in a very useful feature of SQL Server 2016 and higher. The AT TIME ZONE syntax was added in SQL Server 2016 to handle changing datetime values from one time zone to the offset of a different time zone using string names for the time zone. This is a great feature that simplifies converting datetime values but there is an unfortunate draw back to the implementation; it relies on the time zones that are stored in the Windows Registry and therefore has to make calls out to Windows which unfortunately occurs row-by-row for large result sets when used inside of a query.

I have run into this issue twice now in customer systems and it is something that has a significant impact to query performance. Microsoft is aware of the problem, but a fix is not immediately on the horizon as far as I know today. However, there are a couple of workarounds that can be used to achieve the same result with fast performance, just not using the newer syntax. I initially ran into this on premise with SQL Server 2019, but it also affects Synapse in Azure as well.

Check It Out!

SQLskills Complete Short Course Bundle

$4,575.00 Original price was: $4,575.00.$899.00Current price is: $899.00.

Example Queries

For the performance impact to show up, you have to be querying against a relatively large size data set. I am going to use AdventureWorks in this post, and you can use the script on my blog post Enlarging the AdventureWorks Sample Databases to create a larger version for testing. The impact is measurable even on the smaller SalesOrderHeader table that ships with AdventureWorks, but it’s not nearly as painful with only 32K rows of data. Here is the example query we are going to use against the SalesOrderHeaderEnlarged table I’ve used for years with 9.8 million rows of data, which still isn’t that much.

SELECT OrderDate AT TIME ZONE 'Pacific Standard Time' AS OrderDate, COUNT(SalesOrderID) AS OrderCount FROM [AdventureWorks2014].[Sales].[SalesOrderHeaderEnlarged] GROUP BY OrderDate AT TIME ZONE 'Pacific Standard Time';

SQL Server Execution Times:

CPU time = 221500 ms, elapsed time = 227653 ms.

This query takes a whopping 3 minutes and 41 seconds to run on my 8 core system with 8GB RAM dedicated to SQL Server, when the entire data set exists in the buffer pool already. The first system where I found AT TIME ZONE to be a performance inhibitor, I was working with a data set that was in the 3+ billion rows per day size range that was stored in a clustered columnstore, and the query that was executing would literally spin for days, and live query stats showed that it was processing rows but at a painfully slow rate. I was almost ready to give up and accept defeat on tuning this process when I decided to drop out of SQL Server and use xperf to capture a latency stackwalk while running the query in isolation on a development machine to see why in the world this was running so incredibly slow.

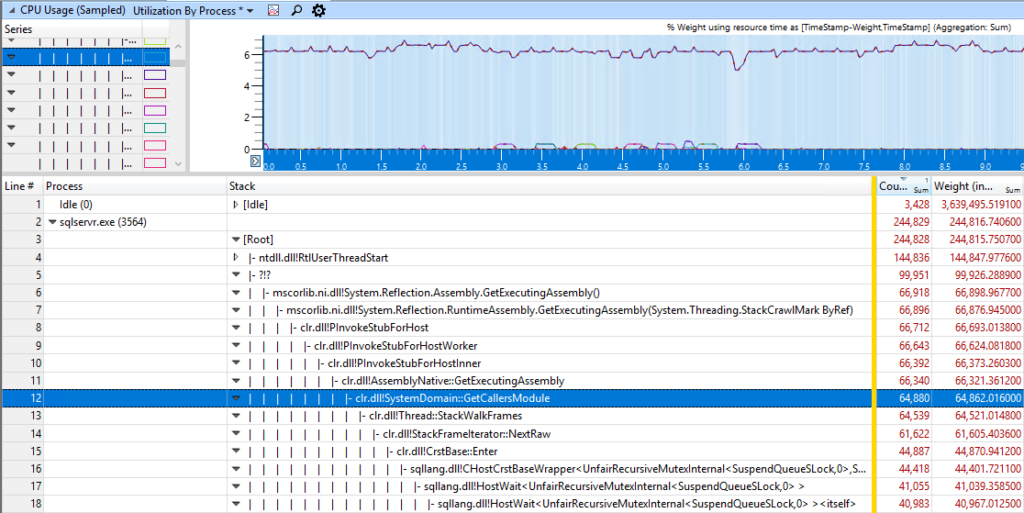

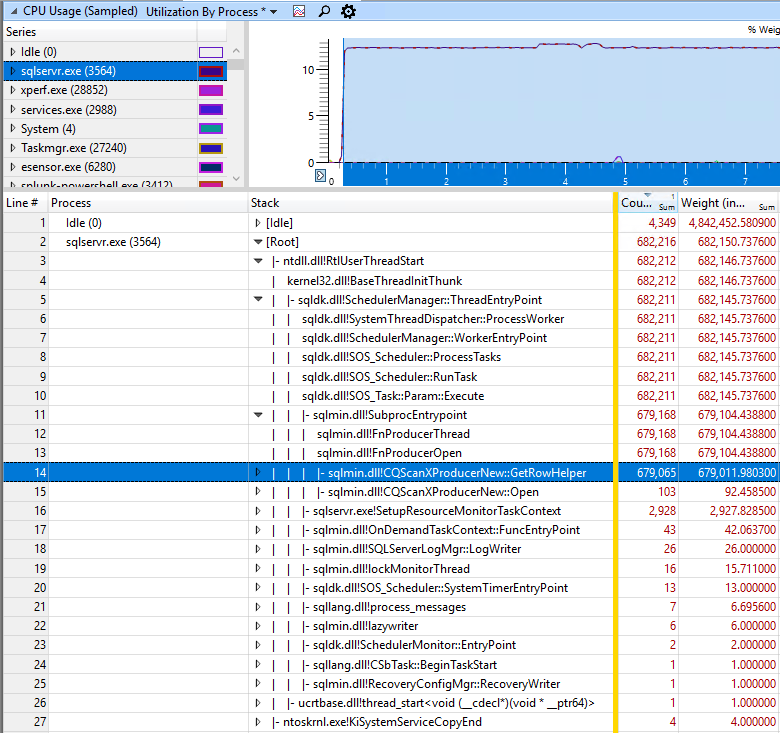

Here you can see that the CPU time is going to an external CLR call, so I started looking at what in the code from TRY_PARSE(), to CONCAT(), etc could be the cause of it and methodically eliminated things from the code one at a time testing to see what was the culprit. It turned out to be the implementation of AT TIME ZONE, which calls external to Windows for the conversion to include DST information maintained by the OS already instead of SQL Server having to maintain logic for DST conversions and be updated anytime someplace changes their rules for DST. After removing the AT TIME ZONE references (there happened to be 15 of them in the process) and just using datetimes as provided by data instead, the run time dropped to under 30 minutes for a process that I would literally start on Monday morning and check in on at the end of Tuesday before leaving work to see where it was at. The xperf stackwalk without AT TIME ZONE is below, and shows that it is able to consume rows much faster.

Working Around the Issue

Since I still needed to have the datetime values converted to the appropriate time zone following daylight savings time rules, I tried a couple of different things. First, in the documentation for AT TIME ZONE, it calls out that there is a system object sys.time_zone_info in SQL Server that outputs the information stored in the Windows Registry. So I tried rewriting the relevant code sections using AT TIME ZONE to instead do a CROSS JOIN to this view similar to the following code:

SELECT DATEADD(hh, hour_offset, DATEADD(mi, minute_offset, OrderDate)) AS OrderDate, COUNT(SalesOrderID) AS OrderCount FROM [AdventureWorks2014].[Sales].[SalesOrderHeaderEnlarged] CROSS JOIN ( SELECT CAST(PARSENAME(REPLACE(current_utc_offset, ':', '.'), 1) AS INT) AS minute_offset, CAST(PARSENAME(REPLACE(current_utc_offset, ':', '.'), 2) AS INT) AS hour_offset FROM sys.time_zone_info AS tzi WHERE tzi.name = 'Pacific Standard Time' ) AS t GROUP BY DATEADD(hh, hour_offset, DATEADD(mi, minute_offset, OrderDate));

SQL Server Execution Times:

CPU time = 72828 ms, elapsed time = 72834 ms.

We are doing better, but this still has a run time of 1 minute and nearly 13 seconds. So I decided to try creating local variables of the hour and minute offsets required and just using those in the query:

DECLARE @minute_offset INT, @hour_offset INT; SELECT @minute_offset = CAST(PARSENAME(REPLACE(current_utc_offset, ':', '.'), 1) AS INT), @hour_offset = CAST(PARSENAME(REPLACE(current_utc_offset, ':', '.'), 2) AS INT) FROM sys.time_zone_info AS tzi WHERE tzi.name = 'Pacific Standard Time'; SELECT DATEADD(hh, @hour_offset, DATEADD(mi, @minute_offset, OrderDate)) AS OrderDate, COUNT(SalesOrderID) AS OrderCount FROM [AdventureWorks2014].[Sales].[SalesOrderHeaderEnlarged] GROUP BY DATEADD(hh, @hour_offset, DATEADD(mi, @minute_offset, OrderDate));

SQL Server Execution Times:

CPU time = 4515 ms, elapsed time = 4504 ms.

EUREKA! Now we are cooking with fire! We only pay the expense of going out to the OS and CLR once, and now SQL Server knows how to do math in a set based manner so it doesn’t have to run row-by-row executing the query, and as you can see performance is significantly better at 4 and a half seconds.

HOWEVER, THIS IS NOT THE CORRECT WAY TO DO THINGS ACROSS DATE RANGES! This only demonstrates that the performance impact is the result of AT TIME ZONE. To see how to work across date ranges read my follow up post: More About AT TIME ZONE.

Takeaways

Troubleshooting performance problems can sometimes involve going outside the normal things we would look at to determine why something is running slowly. I’ve used XPerf a few times in client engagements to collect low level latency stack walk data to figure out what’s happening under the covers, but it’s not common. The SQL Server team at Microsoft is aware of the performance impact of AT TIME ZONE, but the design change required to fix the implementation is not trivial. The decision to rely on Windows to get daylight savings time information was likely made because it’s already available there and it is updated when a location decides to change whether or not to follow daylight savings time. To me, this isn’t a huge deal, there is an easy way to work around the performance issue that exists, but it can be a pain trying to diagnose what’s happening with a query that otherwise doesn’t provide much in the way of performance tuning.

5 thoughts on “AT TIME ZONE Performance Issues”

Extra Points for the xperf usage!

Most DBAs (Developers) need to know about this tool

I’ve been seeing this issue in Azure SQL Database on data with hundreds of millions of rows recently however each row could be from a different time zone. Also, what do you do if certain rows are before the switch to daylight savings time, and certain rows are after the switch to DST?

While your solution resolves the performance issue, it also introduces inaccurate times when the date range queried crosses the daylight savings barriers. Getting this right is not easy. The beginning and end of daylight savings is decided country by country. In addition, a country may decided to change the daylight savings start and end. This happened in 2007 in the US. Based on the number of time zone updates Microsoft releases for Windows, it appears to be a regular occurrence around the world.