In my last post: Little Bobby Tables, SQL Injection and EXECUTE AS, I wanted to highlight how to avoid SQL Injection when using EXEC. A few people brought up the point that I could have avoided SQL Injection by using sp_executesql. And, while sp_executesql can be used in some cases, it’s not always the most ideal from a performance perspective. So, to really show this, I’m going to start with focusing on the similarities and differences of EXEC and sp_executesql. I’ll start here with some performance details but you’ll find that I’m going to just hit the tip of the iceberg with this one. I’ll definitely need another post or two!

First, a quick overview:

sp_executesql (also known as “Forced Statement Caching”)

Check It Out!

SQLskills Complete Short Course Bundle

$4,575.00 Original price was: $4,575.00.$899.00Current price is: $899.00.

- Allows for statements to be parameterized.

- Only allows parameters where SQL Server would normally allow parameters; however, this string can be built using forms of dynamic constructs. I’ll give more details on this in additional posts.

- Has strongly typed variables/parameters – and this can reduce injection and offer some performance benefits!

- Creates a plan on first execution (similar to stored procedures) and subsequent executions reuse this plan

EXEC (also known as “Dynamic String Execution” or DSE)

- Allows *any* construct to be built.

- Treats the statement similarly to an adhoc statement. This means that the statement goes through the same process that adHoc statements do – they are parsed, probably parameterized and possibly deemed “safe” for subsequent executions to re-use.

- Does not have strongly typed parameters in the adhoc statement and therefore can cause problems when the statements are executed (I have ways around this.)

- Does not force a plan to be cached.

- This can be a pro in that SQL Server can create a plan for each execution.

- This can be a con in that SQL Server needs to recompile/optimize for each execution.

Let’s start with using sp_executesql to parameterize a query where SQL Server would also allow parameters:

DECLARE @ExecStr NVARCHAR(4000);

SELECT @ExecStr = ‘SELECT * FROM dbo.member WHERE lastname LIKE @lastname’;

EXEC sp_executesql @ExecStr, N’@lastname varchar(15)’, ‘Tripp’;

GO

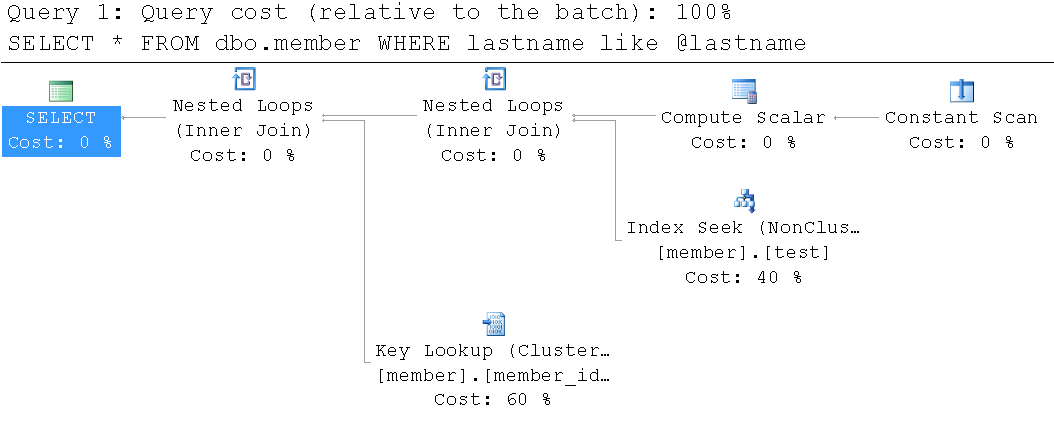

Because ‘Tripp’ is a highly selective name, SQL Server uses an index to the lookup of the data:

So, for our next execution, I’ll supply a different lastname – a lastname of Anderson. In this database, Anderson is NOT highly selective:

DECLARE @ExecStr NVARCHAR(4000);

SELECT @ExecStr = ‘SELECT * FROM dbo.member WHERE lastname LIKE @lastname’;

EXEC sp_executesql @ExecStr, N’@lastname varchar(15)’, ‘Anderson’;

GO

However, the query plan looks exactly the same:

Or does it? It turns out that this query plan does look a tiny bit different but it’s not very obvious… it’s in the thickness of the lines. In this second execution it clearly shows that more data is being passed between the steps. But, is this a bad thing? Maybe, maybe not. Let’s drill in a bit deeper. If I hover over the Index Seek (on member.test), I can see the following tooltip:

The key point here is that it shows an “Estimated Number of Rows” of 1.96 but an “Actual Number of Rows” of 385. That’s pretty far off… why? Because this statement’s plan was determined by the first execution of sp_executesql. Let’s try another execution.

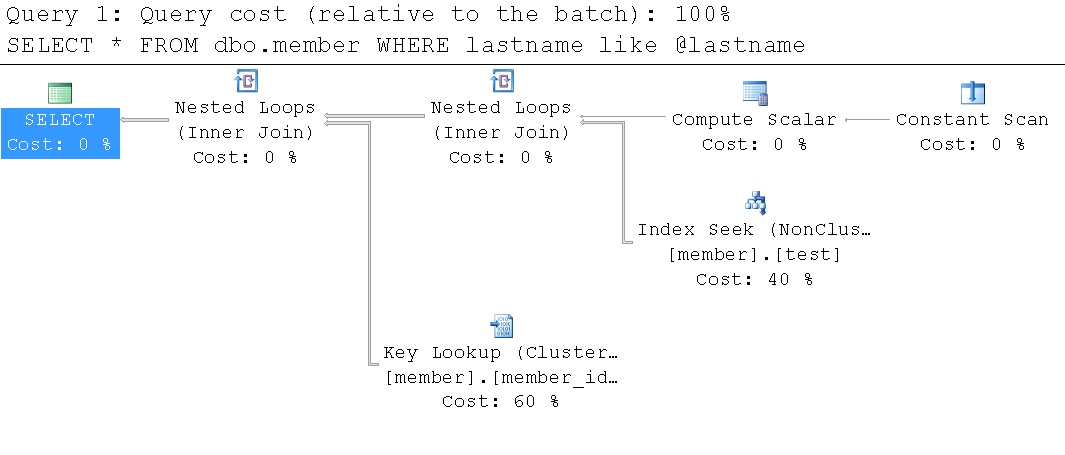

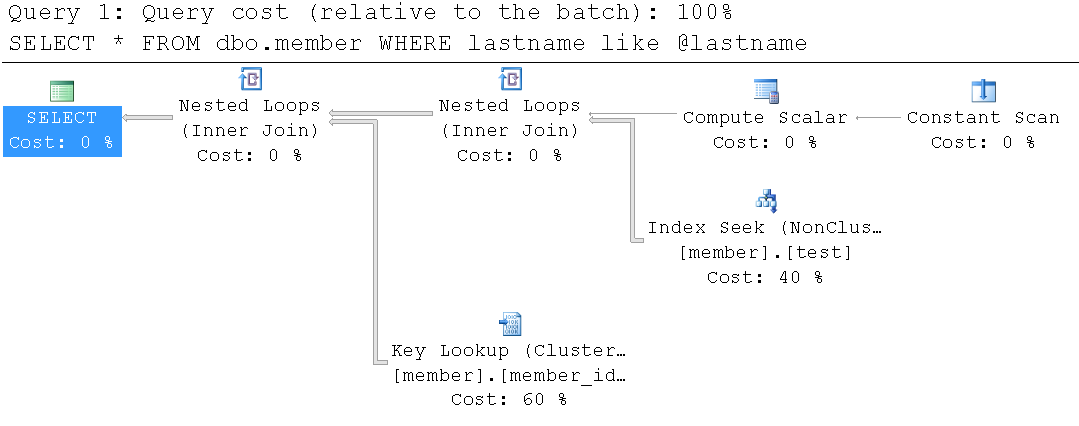

Because the query has LIKE in it, we can use wildcards. And, let’s do that! This time I’ll supply a wildcard of %e%:

DECLARE @ExecStr NVARCHAR(4000);

SELECT @ExecStr = ‘SELECT * FROM dbo.member WHERE lastname LIKE @lastname’;

EXEC sp_executesql @ExecStr, N’@lastname varchar(15)’, ‘%e%’;

GO;

And, again, the query plan looks the same… but with even thicker lines. Yes, I realize… this is not blindingly obvious:

And, by turning on SET STATISTICS IO ON, we can also review the [Logical] IOs performed:

For ‘Tripp’

- Table ‘member’. Scan count 1, logical reads 8, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

For ‘Anderson’

- Table ‘member’. Scan count 1, logical reads 772, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

For ‘%e%’

- Table ‘member’. Scan count 1, logical reads 10019, physical reads 0, read-ahead reads 0, lob logical reads 0, lob physical reads 0, lob read-ahead reads 0.

This table only has 144 pages. In the cases of ‘Anderson’ and ‘%e%’, SQL Server would have been better off doing a table scan. But, because I used sp_executesql I forced SQL Server to do caching. Subsequent users use the plan whether it’s optimal or not.

And, there are even more complicated scenarios than this. I plan to keep tackling these issues over the next few days and I’ll add quite a bit more to this. However, it’s late. So, I’ll dangle the carrot for now. Within the next couple of days, I’ll show a series of EXEC statements that generate both SAFE and UNSAFE plans.

Thanks for reading!

kt

6 thoughts on “EXEC and sp_executesql – how are they different?”

I think that saying that sp_executesql "forces" a plan to be cached when comparing it with EXEC is a bit misleading. For both methods a plan will be cached (or perhaps not, for non-parameterized queries, depending on the "optimize for ad hoc" setting). The real difference is whether a parameterized plan will be cached. sp_executesql, given a non-parameterized query, has the exact same caching characteristics as EXEC, and if the optimizer is able to parameterize the query there will be no recompile.

Thanks for the post, explains the difference well. Those pesky lines in the query plan can be difficult to see at times — caught me a couple of times. Presumably the sp_executesql would honor an OPTIMIZE FOR hint if you knew a good value to use? Or perhaps an OPTIMIZE FOR UNKNOWN? But I hate using those.

BTW, why are the images so large (wide)?

Kimberly,

what happens if you use EXEC sp_executesql… WITH RECOMPILE ? would that force recompilation of the query plan?

Most importantly EXEC runs in its own process space

Hey there everyone – I’ve had a pretty intense week and I’m a bit behind on replying to all of these comments (I just even approved all of them). Anyway, I still have *a lot* more to post on this subject but let me address a few things here:

@Adam-What I see more than anything are parameterized queries that when executed with sp_executesql are cached – and are essentially forced. And, they work just like stored procedures in that their plan is determined by the first execution. As a result, you can end up with a bad plan getting into cache and then being reused for subsequent executions. And, for the *EXACT* same statement executed with EXEC, SQL Server will ONLY parameterize and store a plan if it deems that plan as safe. For complex plans and those that have parameters that can effect optimization – EXEC will NOT save the plan. I have seen servers almost fall over because of poorly written procs and procs written with sp_executesql. The solution that ALWAYS works is a conversion to EXEC. Believe me, I still have a few more things to post and I’ll show a few exact examples where sp_executesql does NOT work well and EXEC shines! ;-)

@dm_unseen – Great question and that’s going to be my next comment/post. I could optimize this by using WITH RECOMPILE but I would suspect that most client-side apps aren’t really doing this and ultimately this would be better off in a stored procedure where a backend database developer would (and should be) more aware of the recompilation options. And, a good point to make as well is that while WITH RECOMPILE will work for this statement, there are others (and I promise – these are coming up soon!) that will NOT work when you just add WITH RECOMPILE. So, it’s a VERY good solution and does work really well for more simplified statements, it doesn’t always work. (ugh, this is going to be a long series :) :).

@o0osangamo0o – While I suspect that you are a SPAMBOT, I’ll at least comment that if you have constructive recommendations I’ll all for hearing them. Oh, and we take cash, check, and all major credit cards ;-).

@Nitin – I’d like to hear a bit more about what you mean here. It’s not entirely accurate to say a separate process space but I think you’re referring to the fact that things that like variables are not visible outside of the "scope" of the DSE/sp_executesql. However, there are some tricks here. You can build a statement and execute it with sp_executesql where you have defined one of your parameters as output. So, there are ways to get things in/out.

Again, sorry for my delay. I do hope to get another post on this soon.

Thanks!!

Kimberly

You are correct,I was referring to Variables not available. Looking forward to those tips/tricks

Thank You