This month the SQLskills team is presenting a series of blog posts aimed at helping Accidental/Junior DBAs ‘keep the SQL Server lights on’. It’s a little taster to let you know what we cover in our Immersion Event for The Accidental/Junior DBA, which we present several times each year. You can find all the other posts in this series at http://www.SQLskills.com/help/AccidentalDBA. Enjoy!

So far this month, Glenn has done an awesome job talking about the hardware considerations for SQL Server in a physical implementation, including CPU and memory considerations, disk configuration, and considerations for SSD usage. Glenn also covered installation and configuration best practices for SQL Server.

In today’s post I’m going to discuss specific considerations for running SQL Server in a virtualized configuration. One of the most important things to keep in mind is that the CPU, memory, and I/O requirements for SQL Server in a virtual world are generally no different than in the physical world, so I recommend reading Glenn’s earlier posts before reading this one.

Reasons for Virtualizing

There are a number of factors that motivate businesses to virtualize line-of-business applications like SQL Server, but the main one is that virtualization is generally cheaper overall than running a number of physical servers. “Cheaper” can mean a lot of different things: fewer licenses, less physical rack space, lower power consumption, and lower cooling costs.

Cheaper can also mean less hardware is used, which can translate into performance problems for SQL Server depending on the configuration being used. I typically see three major problems over and over when consulting and dealing with SQL Server virtual machines (VMs):

- Incorrect I/O sizing for the workload based on capacity and not performance requirements in IOPS (I/Os per second) or MB/sec transfer rates.

- Overcommitment of memory under VMware where no memory reservations have been set for the SQL Server VM to prevent “ballooning”.

- Overcommitment of host resources for CPU.

I’m going to explain each of these problems in a bit more detail to help you out if you’re an Accidental DBA with responsibility for SQL Server in a virtual environment.

VM I/O Considerations

Of all the problems that can affect SQL Server VM performance, the ones that I dread seeing are I/O subsystem sizing problems, as these are the hardest to fix.

Typically when I come across SQL Server VMs that have these issues, it is the result of the I/O subsystem having been sized only on storage capacity, with a view to minimizing costs. Quite often this sizing technique results in a small number of high capacity, slow rotational speed disks, combined with 1-Gb/sec iSCSI for connectivity to the SAN.

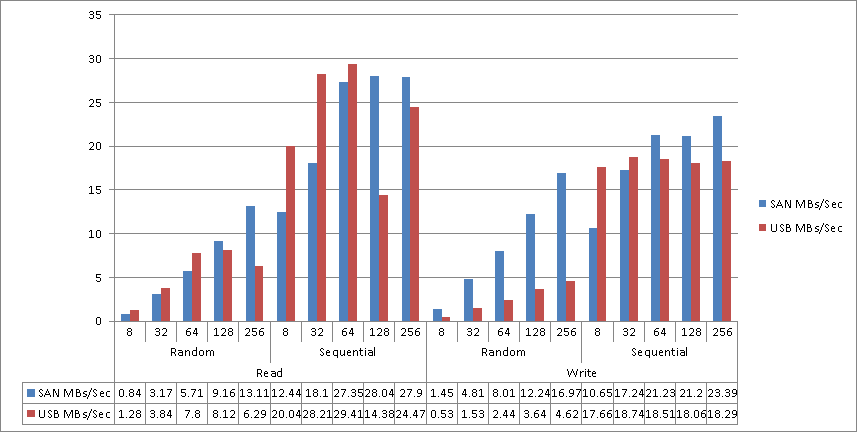

In the worst case I’ve seen consulting, the underlying I/O subsystem was built using six 1-TB, 7200-RPM disks in a NetApp SAN. After running a round of SQLIO (a free tool from Microsoft that allows I/O subsystem performance testing) tests on that SAN, followed by another round of tests to validate the very poor results I was seeing, I decided to run comparison tests against a USB-2, 5400-RPM external disk that I used at the time for backups of important files on my laptops. The results of these SQLIO tests are shown below:

The vertical axis is MBs/second and the horizontal axis lists the I/O size being tested in KB. As you can see, my external USB drive came really close to this company’s entire SAN for performance!

For the NetApp SAN, it turned out that the company’s entire data center was virtualized on this same SAN. For application VMs this type of setup might work reasonably well, or at least you won’t see the effects of the poor I/O configuration as strongly as you will for a SQL Server workload (which is generally a much heavier I/O workload). Additionally, the SAN was connected using 1-Gb/sec iSCSI with only 4 network paths to the storage, and these paths were shared by three different VM hosts, servicing all of the I/O demands of all of the VMs.

There is no SAN magic in the world that can beat the physical limitations of an improperly sized I/O subsystem that has to service all of the I/O demands of a heavily virtualized environment.
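A rough back-of-the-envelope estimate shows why a handful of large, slow disks cannot keep up. The Python sketch below approximates the random IOPS of one rotational disk from its average seek time plus average rotational latency, then multiplies by the number of spindles. The 8.5 ms seek time and the function names are assumptions for illustration only, not measurements from the SAN described above, and the estimate ignores controller cache and RAID write penalties.

```python
def disk_random_iops(rpm: float, avg_seek_ms: float) -> float:
    """Estimate random IOPS for one rotational disk.

    Service time per random I/O is approximated as the average seek time
    plus the average rotational latency (half of one full rotation).
    """
    rotation_ms = 60_000.0 / rpm              # time for one full rotation
    avg_rotational_latency_ms = rotation_ms / 2.0
    service_time_ms = avg_seek_ms + avg_rotational_latency_ms
    return 1000.0 / service_time_ms


def array_random_iops(disks: int, rpm: float, avg_seek_ms: float) -> float:
    """Raw aggregate estimate: spindle count times per-disk IOPS."""
    return disks * disk_random_iops(rpm, avg_seek_ms)


if __name__ == "__main__":
    # Six 7200-RPM disks, with an ASSUMED 8.5 ms average seek time.
    per_disk = disk_random_iops(rpm=7200, avg_seek_ms=8.5)
    total = array_random_iops(disks=6, rpm=7200, avg_seek_ms=8.5)
    print(f"per disk: {per_disk:.0f} IOPS, six disks: {total:.0f} IOPS")
```

With those assumed inputs, six 7200-RPM disks work out to only a few hundred random IOPS in total, no matter how many terabytes they hold.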

SQL Server I/O requirements should be expressed in terms of performance and not just capacity, with the expectation that in order to obtain the best performance there will be unused capacity in the configuration. You also need to consider the throughput requirements in MB/sec, and whether or not the available network connections to the SAN will be able to meet those requirements for your workload. In general, each 1-Gb/sec connection will work out to between 90 and 110 MB/sec of throughput (nominally it is 125 MB/sec, but you’ll rarely achieve this, in my experience), provided the underlying storage configuration can also meet that level of performance.
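The throughput arithmetic can be sketched in a few lines of Python. This is a minimal illustration, not a sizing tool: the 100 MB/sec default is simply the midpoint of the 90 to 110 MB/sec range quoted above, the nominal figure treats 1 Gb/sec as 1000 Mb/sec (decimal units), the even split across hosts is a simplifying assumption, and all function names are hypothetical.

```python
import math


def nominal_mb_per_sec(link_gbit_per_sec: float) -> float:
    """Nominal MB/sec for a link, using decimal units (1 Gb = 1000 Mb)."""
    return link_gbit_per_sec * 1000.0 / 8.0


def paths_needed(required_mb_per_sec: float,
                 realistic_mb_per_path: float = 100.0) -> int:
    """Number of network paths needed to carry a required throughput."""
    return math.ceil(required_mb_per_sec / realistic_mb_per_path)


def per_host_share(paths: int, hosts: int,
                   realistic_mb_per_path: float = 100.0) -> float:
    """Throughput per host if the paths are shared evenly (an assumption)."""
    return paths * realistic_mb_per_path / hosts


if __name__ == "__main__":
    print(nominal_mb_per_sec(1))           # nominal MB/sec for 1 Gb/sec
    print(paths_needed(450))               # paths for a 450 MB/sec workload
    print(round(per_host_share(4, 3), 1))  # 4 paths shared by 3 hosts
```

Applied to the example above, four 1-Gb/sec paths shared evenly by three hosts leave each host with roughly a single path's worth of throughput for all of its VMs.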

VM Memory Considerations

SQL Server is a memory-dependent application. In fact, large amounts of memory for SQL Server can often hide underlying I/O subsystem inadequacies by allowing more data to be cached in memory, thereby reducing the read I/O associated with the server workload.

However, one of the problems with running SQL Server in a VM is that the memory allocated to the VM might not be available if the host is over-subscribed (the sum of all the memory allocated to the various VMs is more than that available on the physical host).

VMware and Hyper-V have different approaches for VM memory allocations. VMware allows for over-provisioning of memory on a host by default, whereas Hyper-V performs admission checks at VM startup to ensure that the memory is going to be available for the VM. The exception to this in Hyper-V is when Dynamic Memory has been configured for the VM, which then performs the admission check for the startup memory configuration for the VM and allows the memory usage to grow to the maximum memory setting. Under Hyper-V, the VM also has a minimum memory configuration value which specifies the minimum size the VM will be allotted if memory pressure on the host occurs. Under VMware, the VM tools install a Balloon Driver, which is designed to reclaim memory from VMs if the host is over-provisioned and under memory pressure.

Since SQL Server is a very memory-dependent application, neither Dynamic Memory nor ballooning is generally desirable for high-performance configurations. VMware does allow the configuration of static memory reservations to prevent ballooning of individual VMs, and when memory over-commit is in use for a host, the recommended best practice from VMware is to set memory reservations for low-latency applications like SQL Server. Make sure that memory pressure is not causing a performance problem for your SQL Server VMs.
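A quick way to reason about over-subscription is to compare the memory allocated to all VMs on a host against the host's physical memory, and to list the VMs whose reservation does not cover their full allocation. The Python sketch below does that for a made-up inventory. The class, the function names, and the sample values are all hypothetical, and the calculation ignores hypervisor overhead.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class VmMemory:
    name: str
    allocated_gb: float
    reserved_gb: float = 0.0  # static reservation; 0 means none configured


def memory_overcommit_ratio(vms: List[VmMemory], host_physical_gb: float) -> float:
    """Sum of allocated VM memory divided by host physical memory.

    A value above 1.0 means the host is over-subscribed.
    """
    return sum(vm.allocated_gb for vm in vms) / host_physical_gb


def vms_exposed_to_reclaim(vms: List[VmMemory]) -> List[str]:
    """Names of VMs whose reservation does not cover their full allocation."""
    return [vm.name for vm in vms if vm.reserved_gb < vm.allocated_gb]


if __name__ == "__main__":
    # Hypothetical inventory for a host with 128 GB of physical memory.
    inventory = [
        VmMemory("sql01", allocated_gb=64, reserved_gb=64),
        VmMemory("app01", allocated_gb=48),
        VmMemory("app02", allocated_gb=32),
    ]
    print(memory_overcommit_ratio(inventory, host_physical_gb=128))
    print(vms_exposed_to_reclaim(inventory))
```

In this made-up inventory the host is over-subscribed, but the SQL Server VM has a full reservation, so only the two application VMs are candidates for memory reclamation.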

VM CPU Considerations

As a general rule, VMs should be configured with the lowest number of virtual CPUs (vCPUs) required to meet the application workload demands. For SQL Servers, you should also consider the impact that a reduced number of vCPUs might have on the query workload when parallel query execution is common. However, it is not uncommon for “wide” virtual machines (with a high number of vCPUs) to run into scheduling problems when mixed with other “narrow” virtual machines (with a small number of vCPUs) on the same host. The problem occurs because the physical CPUs are being shared in time slices with the other VMs that are also running on the same host.

While a virtual machine might appear to the guest OS (the OS that’s running in the VM) as if it has, for example, 4 processors, the virtual processors might not be scheduled equally inside of the available time slices for the physical processors if there is concurrent activity in other VMs. To prevent problems associated with skew accumulation across the vCPU scheduling (i.e. some vCPUs not being scheduled while others make progress), the hypervisor (the software layer that manages the VMs running on the physical host) will periodically co-schedule the vCPU execution, requiring that the same number of physical CPUs as vCPUs be made available so that the wide VM can be scheduled and its workload can progress. VMware implemented a relaxed co-scheduling algorithm that has changed over time to only require co-scheduling of the vCPUs that have skewed, instead of requiring all vCPUs to co-schedule, but under parallel workloads in SQL Server, this could still correlate to full co-scheduling of the VM.

What all this means is that a SQL Server workload in a wide VM may stall periodically waiting for processors to become available.

This is a common occurrence that I see with SQL Server VMs: a specific workload is hitting performance problems with CPU, generally measured using the % Processor Time within the VM (which only represents the VM’s usage of the allocated resources and not actual physical processor usage), and the number of vCPUs is increased, which results in even more performance degradation.

When this occurs, reducing the number of vCPUs often improves performance. When co-scheduling issues exist, either as a result of host over-commitment or of mixing “wide” virtual machines with smaller ones, this is usually the only option aside from adding another physical host to the overall configuration to try to balance out the workload. In VMware, CPU Ready time can often be a sign of CPU issues for VMs, and it is something that I generally track to determine if a VM is having scheduling problems inside of the hypervisor.
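CPU Ready is typically exposed in the VMware performance charts as a summation in milliseconds over the sample interval, which is hard to compare across VMs until it is converted to a percentage. The Python sketch below applies the commonly documented conversion: ready milliseconds divided by the interval length in milliseconds, times 100. The 20-second default assumes the real-time chart interval, dividing by the vCPU count to get a per-vCPU average is an additional assumption, and the function name is hypothetical.

```python
def cpu_ready_percent(ready_summation_ms: float,
                      interval_seconds: float = 20.0,
                      vcpus: int = 1) -> float:
    """Convert a CPU Ready summation (ms) into a percentage of the interval.

    interval_seconds: length of the sample interval (ASSUMED 20 s, the
        real-time chart interval; historical rollups use longer intervals).
    vcpus: divide by the vCPU count to get an average per vCPU when the
        summation covers the whole VM (an assumption about the input).
    """
    if interval_seconds <= 0 or vcpus < 1:
        raise ValueError("interval_seconds must be > 0 and vcpus must be >= 1")
    return ready_summation_ms * 100.0 / (interval_seconds * 1000.0 * vcpus)


if __name__ == "__main__":
    # 1,000 ms of ready time in a 20-second sample for a single vCPU.
    print(cpu_ready_percent(1000))
    # 4,000 ms of ready time across a 4-vCPU VM in the same interval.
    print(cpu_ready_percent(4000, vcpus=4))
```

Tracking this percentage before and after a change in vCPU count is one way to check whether a VM's scheduling delays actually improved.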

Summary

Virtualizing SQL Server workloads is becoming more common in many companies, and is even supported by Microsoft (see KB article 956893) if the host configuration meets all their documented requirements. With the appropriate considerations around workload sizing and hardware provisioning, SQL Server VMs can deliver the required performance for many line-of-business uses. However, when cost cutting is the driving reason behind server virtualization, the resulting configurations can be problematic for SQL Server performance. With the information I’ve provided in this post, you now know the three main areas that can cause performance problems in SQL Server VMs.

6 thoughts on “The Accidental DBA (Day 5 of 30): Virtualization Considerations”

I have been looking for some specific background information about virtualised SQL Server environments. This is great stuff. Thanks for posting!

Jonathan,

This is helpful info.

Do you plan to share more about the techniques you use to find I/O, RAM and CPU problems?

Thanks,

Mark

Hey Mark,

I just recorded a 4hr course on SQL Server Virtualization including demos of common problems in both VMware and Hyper-V and how to identify and resolve them.

http://www.pluralsight.com/training/Courses/TableOfContents/sql-server-virtualization-planning-practice

Cheers!

Thank you!!